Shannon's Communication Theory

Contents

A First Look at Shannon's Communication Theory

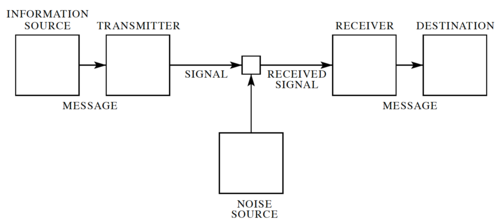

In his landmark 1948 paper[1], Claude Shannon developed a general model for communication systems, as well as a framework for analyzing these systems. The model has three components: (1) the sender or source, (2) the channel, and (3) the receiver or sink. The model also includes the transmitter that encodes the message into a signal, the receiver, for decoding the signal back into a message, as well the noise of the channel, as shown in Fig. 1.

In Shannon's discrete model, the source provides a stream of symbols from a finite alphabet, , which are then encoded. The code is sent through the channel, which could be corrupted by noise, and when the code reaches the other end, it is decoded by the receiver, and then the sink extracts information from the steam of symbols.

Note that sending information in space, e.g. from here to there is equivalent sending information in time, e.g. from now to then. Thus, Shannon's theory applies to both information transmission and information storage.

Shannon's Noiseless Coding Theorem

One very important question to ask is: "How efficiently can we encode the information that we want to send through the channel?" To answer this, let us assume: (1) the channnel is noiseless, and (2) the receiver can accurately decode the symbols transmitted through the channel. First, let us define a few things.

Let be the alphabet of the channel, or in other words, the set of symbols that can be sent through the channel. Thus, encoding the source alphabet can be expressed as a function, , where is the set of all possible finite strings of symbols from .

Let where , i.e. the length of the string of channel symbols encoding the symbol .

The Kraft-McMillan Inequality

A code with lengths is uniquely decodable (UD) if and only if:

-

(1)

-

To outline the proof, let's first evaluate :

-

(2)

-

Let . Thus, the minimum value of is , when all the codewords are 1 bit long, and the maximum is , when all the codewords have the maximum length.