Channeling your inner capacity


Channel Basics

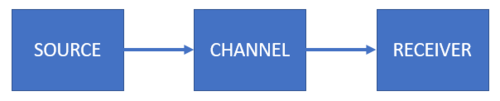

When Shannon developed his theory of information, it was in the context of improving communication. His goal was to determine whether there was a way to maximize the transmission rate over a noisy channel. In this module, we will learn more about the channel and some formal terminology that we will use in the succeeding modules. The GIF below recalls the story of Bob and Alice. Bob wants to send a message to Alice across some channel. Let's simplify the scenario: say Bob sends a love letter to Alice through a wireless (satellite-based) channel.

Figure 2 shows a simplified model of their communication. Bob is the source; sometimes we use the term sender or transmitter instead. The medium through which the message travels is the channel (in this case, the wireless channel). Alice is the receiver, who hopefully gets the correct message at the other end of the channel. Let's take a look at each component formally.

The Source

The source is the component that produces the messages or objects sent over a channel. We represent the source as a random variable $S$ with outcomes $\{s_1, s_2, \ldots, s_n\}$, where each outcome $s_i$ has its own probability $P(s_i)$; together these make up the probability distribution $P(S)$. The random variable $S$ is also called the source alphabet, and the outcomes $s_i$ are the symbols of the source alphabet. A sequence of symbols forms the message that travels through the channel. Consider the following examples:

- We can let the source alphabet $S$ be the English alphabet, where the symbols are the letters a to z plus the space. Suppose we want to send the message "the quick brown fox jumps over the lazy dog". Every symbol of the English alphabet has a probability associated with it. We saw this in our programming exercise in module 2, where we extracted the probability distributions of N-block combinations for English, German, French, and Tagalog.

- We can let $S$ be the binary alphabet, where the symbols are $\{0, 1\}$. The message could be a stream of 1s and 0s that means something. For example, a message could be a sequence of events where a light is on or off. It could also encode decimal values as binary digits. Finally, it could represent the binary pixels of a fixed-size image.

- In biology, we can let $S$ be the DNA bases, whose symbols are $\{A, C, G, T\}$: $A$ is adenine, $C$ is cytosine, $G$ is guanine, and $T$ is thymine. These symbols combine to form DNA sequences, which are messages that instruct a cell to carry out certain types of protein synthesis.

Make sure to understand carefully what source alphabets, symbols, and messages mean.
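To make the source alphabet, symbols, and message concrete, here is a minimal Python sketch that treats a source as a dictionary mapping each symbol to its probability and builds a message by sampling symbols independently. The probabilities and the helper name `sample_message` are illustrative assumptions; real sources such as English text are not independent from one symbol to the next, which is why module 2 looked at N-block distributions.

```python
import random

# Hypothetical source alphabets: each symbol maps to its probability.
# The numbers are illustrative assumptions, not measured distributions.
BINARY_SOURCE = {"0": 0.7, "1": 0.3}
DNA_SOURCE = {"A": 0.25, "C": 0.25, "G": 0.25, "T": 0.25}


def sample_message(alphabet, length, seed=None):
    """Build a message by drawing `length` symbols independently from the source distribution."""
    if abs(sum(alphabet.values()) - 1.0) > 1e-9:
        raise ValueError("symbol probabilities must sum to 1")
    rng = random.Random(seed)
    symbols = list(alphabet)
    weights = [alphabet[s] for s in symbols]
    return "".join(rng.choices(symbols, weights=weights, k=length))


print(sample_message(BINARY_SOURCE, 16, seed=1))  # a 16-symbol string of 0s and 1s
print(sample_message(DNA_SOURCE, 12, seed=1))     # a 12-base DNA "message"
```

Every message is just a sequence of symbols from the alphabet; only the probabilities decide how often each symbol shows up.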

The Channel

The channel is the medium through which the source message travels until it gets to the receiver. In our Bob and Alice example, they used a wireless channel. Every channel has a maximum rate at which information can be reliably transmitted (for example, in bits per second or bits per channel use). We call this the channel capacity, and we will discuss it later. Most of the time, we associate channels with noise. In a noiseless channel, the message reaches the receiver "perfectly": whatever the source sends gets to the receiver without any glitches or any manipulation of the message's symbols. A noisy channel flips existing symbols of a message or adds unnecessary (or new) symbols that were not originally part of the source alphabet. The noise disrupts the message and thereby affects the information that the receiver retrieves. We model the chance of flipping symbols with conditional probabilities. For example, suppose the receiver gets a 1 but, from a bird's-eye view, we know the source actually sent a 0. We can model this noise with the probability of receiving a 1 given that a 0 was sent, $P(R=1 \mid S=0)$, where $S$ is the symbol sent and $R$ is the symbol received.
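The conditional-probability view of noise can be illustrated with a small simulation. The sketch below assumes the simplest possible noise model, in which every bit is flipped independently with the same probability `eps` (this is the binary symmetric channel introduced later); the function names and the value `eps = 0.1` are hypothetical choices for illustration. Counting how often a sent 0 arrives as a 1 gives an empirical estimate of $P(R=1 \mid S=0)$.

```python
import random


def noisy_binary_channel(bits, eps, seed=None):
    """Hypothetical noise model: flip each bit independently with probability eps."""
    rng = random.Random(seed)
    return [b ^ 1 if rng.random() < eps else b for b in bits]


def conditional_estimate(sent, received, s, r):
    """Empirical P(R=r | S=s): among positions where s was sent, the fraction received as r."""
    matches = [rec for snd, rec in zip(sent, received) if snd == s]
    if not matches:
        raise ValueError(f"symbol {s!r} was never sent")
    return sum(1 for rec in matches if rec == r) / len(matches)


sent = [0] * 10_000 + [1] * 10_000
received = noisy_binary_channel(sent, eps=0.1, seed=42)
print(conditional_estimate(sent, received, s=0, r=1))  # close to eps = 0.1
print(conditional_estimate(sent, received, s=0, r=0))  # close to 1 - eps = 0.9
```

With more transmitted bits, the estimate settles closer to the true flip probability.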

Let's take a few examples:

- In the Bob and Alice story, the wireless channel can disrupt Bob's message. Suppose one of the messages Bob sends is "love can be as sweet as smelling the roses". Unfortunately, noise can either corrupt symbols or add unwanted symbols such that the message becomes "love cant bed as sweat as smelting the noses". This is a disaster if it gets to Alice. 😱

- Another example is memory. Suppose you're on Mars and you need to record audio logs and videos on a solid-state drive (SSD). Memory can be corrupted by cosmic-ray bit flips, which occur when high-energy particles from space strike a memory cell and change a stored bit. Even a few flipped bits can turn stored data into a pattern that means something totally different.

- The last example is social media. Good people (source) would like to spread factual news (message) that is worth broadcasting to ordinary citizens (receivers). Unfortunately, this does not stop evil citizens from creating fake news (noise). Since factual news and fake news mix together on social media, fake news disrupts the intended messages for ordinary citizens. Don't you hate it when this happens? 😩 Innocent people fall into this trap.

Of course, if the channels were noiseless, the examples above wouldn't have any issues with the received messages. We won't be dealing with the physics of channels in this course.

The Receiver

The receiver, obviously, receives and accepts the message at the other end of the channel. Just like the source, the receiver is a random variable $R$ whose outcomes $\{r_1, r_2, \ldots, r_m\}$ are associated with a probability distribution $P(R)$. We can also call $R$ the receiver alphabet, with symbols $r_j$. Observe that we purposely set the maximum index to $m$ because, when the channel is noisy, the receiver may receive more symbols than the source alphabet contains (i.e., $m > n$). A noiseless channel produces $R = S$, where all outcomes $r_i = s_i$ and the probability distributions $P(R) = P(S)$. If the channel is noisy, then $R \neq S$: either the outcomes or the probability distributions are not equal. Note that it's possible to have exactly the same outcomes but different probability distributions. Let's take a look at some examples:

- In the Bob and Alice example, Bob sent "love can be as sweet as smelling the roses" but Alice received "love cant bed as sweat as smelting the noses": some symbols flipped and some symbols magically appeared. Here, the source and receiver share the same alphabet (the letters a to z plus the space), but the probability distribution $P(R)$ differs from $P(S)$. Check out the tabulated data below. We'll leave it up to you to figure out how we got these numbers. It should be obvious.

| Outcomes | $P(S)$ | $P(R)$ |
|---|---|---|
| a | 0.071 | 0.091 |
| b | 0.024 | 0.023 |
| c | 0.024 | 0.023 |
| d | 0 | 0.023 |
| e | 0.167 | 0.136 |
| g | 0.024 | 0.023 |
| h | 0.024 | 0.023 |
| i | 0.024 | 0.023 |
| l | 0.071 | 0.045 |
| m | 0.024 | 0.023 |
| n | 0.048 | 0.068 |
| o | 0.048 | 0.045 |
| r | 0.024 | 0 |
| s | 0.143 | 0.136 |
| t | 0.048 | 0.091 |
| v | 0.024 | 0.023 |
| w | 0.024 | 0.023 |
| space | 0.190 | 0.182 |

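- For binary channels, it is possible that the receiver alphabet $R = \{0, 1\}$ is the same as the source alphabet $S = \{0, 1\}$, where 0 and 1 are the symbols. Suppose the source sends a binary image where each pixel is either a 1 or 0, with $P(S=1) = p$ and $P(S=0) = 1-p$. If the channel is noiseless, then $P(R=1) = p$ and $P(R=0) = 1-p$, and the noise conditional probabilities are $P(R=1 \mid S=0) = P(R=0 \mid S=1) = 0$. However, if the channel is noisy, then the noise probabilities $P(R=1 \mid S=0) = P(R=0 \mid S=1) = \epsilon$ take effect, resulting in $P(R=1) = p + \epsilon - 2\epsilon p$ and $P(R=0) = 1 - p - \epsilon + 2\epsilon p$. We will discuss this later. Deriving these results is part of your theoretical exercise 😁.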

- Lastly, the race to select a champion to represent the Philippines will be held this May 2022. Suppose our good citizens (source) have done their part by voting in the elections. Once the ballots are cast, they move to the elections office (channel) for counting. Unfortunately, some evil politician cannot stand losing and bribes (noise) the office to inflate the vote counts in their favor. When the counting finishes, the results (receiver) turn out to be skewed from what the Filipino nation really chose. This can be an interesting topic to look into: can we determine how much noise got into the system?
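One way to reproduce the Bob and Alice table is to treat each message as a sample and compute the relative frequency of every symbol, letters and spaces included. The sketch below uses Python's `collections.Counter`; the helper name `symbol_distribution` is hypothetical.

```python
from collections import Counter


def symbol_distribution(message):
    """Relative frequency of each symbol (letters and space) in a message."""
    counts = Counter(message)
    total = len(message)
    return {symbol: count / total for symbol, count in counts.items()}


sent = "love can be as sweet as smelling the roses"
received = "love cant bed as sweat as smelting the noses"

p_s = symbol_distribution(sent)
p_r = symbol_distribution(received)

# Print both distributions over the union of observed symbols, rounded to 3 places.
for symbol in sorted(set(p_s) | set(p_r)):
    label = "space" if symbol == " " else symbol
    print(f"{label:>5}  {p_s.get(symbol, 0):.3f}  {p_r.get(symbol, 0):.3f}")
```

Symbols missing from one message (like "d" in the sent message or "r" in the received one) simply get probability 0.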

In summary, the three basic components are the source, the channel, and the receiver. The source is characterized by a random variable $S$, which is also the source alphabet, containing the symbols $s_i$ with a probability associated with each outcome. A sequence of symbols creates a message that travels along the channel. The channel is the medium the message goes through, and noise can corrupt the message. Some channels are noiseless, so the message is never corrupted, while others are noisy, so some symbols of the source message can be altered or new symbols can be added to it. The receiver retrieves the message at the end of the channel. The receiver is characterized by a random variable $R$, also called the receiver alphabet, containing the symbols $r_j$ associated with a probability distribution $P(R)$. It is possible for the receiver to receive more symbols than the source (i.e., $m > n$). If the channel is noiseless, then the receiver obtains exactly the same outcomes, $r_i = s_i$, and distributions, $P(R) = P(S)$; otherwise, either the outcomes or the probability distributions won't be the same: $R \neq S$.

Binary Symmetric Channels

We will focus on binary channels since almost all computer systems use binary representations. One of the most common binary channels is the Binary Symmetric Channel (BSC). Figure 2 shows how we draw BSC trees.

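The source alphabet of a BSC is $S = \{0, 1\}$, with a probability distribution $P(S)$ that consists of the probability of sending a 1, $P(S=1) = p$, and the probability of sending a 0, $P(S=0) = 1-p$. The blue arrows model the channel probabilities. The probability of receiving the incorrect value when a signal is sent is $P(R=1 \mid S=0) = P(R=0 \mid S=1) = \epsilon$. In other words, $\epsilon$ is the probability of receiving a 1 when a 0 is sent, and also the probability of receiving a 0 when a 1 is sent. Of course, the probability of receiving the correct value when a signal is sent is $P(R=0 \mid S=0) = P(R=1 \mid S=1) = 1-\epsilon$. Finally, the probabilities of the received data are $P(R=1) = p(1-\epsilon) + (1-p)\epsilon = p + \epsilon - 2\epsilon p$ and $P(R=0) = 1 - p - \epsilon + 2\epsilon p$. Let's tabulate these probabilities:

| Component | Probability |
|---|---|
| $P(S=1)$ | $p$ |
| $P(S=0)$ | $1-p$ |
| $P(R=1 \mid S=0) = P(R=0 \mid S=1)$ | $\epsilon$ |
| $P(R=0 \mid S=0) = P(R=1 \mid S=1)$ | $1-\epsilon$ |
| $P(R=1)$ | $p + \epsilon - 2\epsilon p$ |
| $P(R=0)$ | $1 - p - \epsilon + 2\epsilon p$ |

We are interested in calculating the source entropy $H(S)$, the receiver entropy $H(R)$, the noise entropy $H(R \mid S)$, the mutual information $I(R,S)$, and the per-outcome mutual information $I(r_j, s_i)$. For now, we'll ignore $H(S \mid R)$ because we are much more interested in the information that appears at the receiver.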
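The BSC probabilities described above translate directly into code. This minimal sketch (the function name `bsc_probabilities` is hypothetical) builds the channel probabilities $P(R=r \mid S=s)$, multiplies them by $P(S=s)$ to get the joint probabilities, and sums over $s$ to get $P(R=r)$; the result should match $p + \epsilon - 2\epsilon p$.

```python
def bsc_probabilities(p, eps):
    """Probabilities for a binary symmetric channel (hypothetical helper).

    p:   P(S=1), the probability that the source sends a 1
    eps: crossover probability, P(R=1|S=0) = P(R=0|S=1)
    """
    p_s = {0: 1.0 - p, 1: p}
    # Channel probabilities P(R=r | S=s), keyed by (r, s)
    p_r_given_s = {(0, 0): 1.0 - eps, (1, 0): eps, (0, 1): eps, (1, 1): 1.0 - eps}
    # Joint probabilities P(R=r, S=s) = P(R=r | S=s) * P(S=s)
    joint = {(r, s): p_r_given_s[(r, s)] * p_s[s] for r in (0, 1) for s in (0, 1)}
    # Receiver marginals P(R=r) = P(R=r, S=0) + P(R=r, S=1)
    p_r = {r: joint[(r, 0)] + joint[(r, 1)] for r in (0, 1)}
    return p_s, p_r_given_s, joint, p_r


p, eps = 0.3, 0.1
p_s, p_r_given_s, joint, p_r = bsc_probabilities(p, eps)
print(p_r[1], p + eps - 2 * eps * p)  # both about 0.34
```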

Calculating source entropy

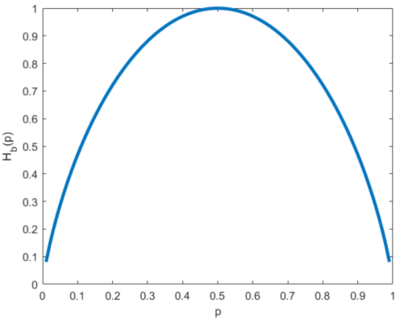

Since the input is a simple binary source, we use the Bernoulli entropy $H_b(p)$. Therefore:

$$H(S) = H_b(p) = -p\log_2(p) - (1-p)\log_2(1-p) \tag{1}$$

Sometimes we prefer to combine terms so that equation 1 is simplified into:

$$H(S) = p\left[\log_2\left(\frac{1-p}{p}\right)\right] - \log_2(1-p)$$

This reduces the number of terms and gives a more elegant equation; however, equation 1 is much preferred if we are to program it. The simplified version runs into undefined (or infinite) values: the fraction $\frac{1-p}{p}$ is undefined at $p = 0$, and $\log_2(1-p)$ is undefined at $p = 1$. Because of this, we'll stick with the form of equation 1 so that it's easier to program. When $p = 0$ or $p = 1$, one of the terms of equation 1 becomes $0 \cdot \log_2(0)$, and we can always have our program return 0 for that term. Recall our "fixed" definition for information. One important observation, an obvious one, is that the source entropy is completely independent of the noise $\epsilon$; it depends only on the probability distribution of our source alphabet. Figure 3 shows the same Bernoulli entropy curve with varying $p$. We've seen this in our introduction to information theory Wiki page.
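Here is a minimal sketch of a Bernoulli entropy function that follows equation 1 and handles the $0 \cdot \log_2(0)$ case by skipping that term, which is the same as treating it as 0. For comparison, it also includes the simplified form, which fails with a `ZeroDivisionError` at $p = 0$ (and with a `ValueError` from `math.log2(0.0)` at $p = 1$). Both function names are illustrative, not taken from the course code.

```python
import math


def bernoulli_entropy(p):
    """Equation 1: H_b(p) = -p log2(p) - (1-p) log2(1-p), with 0*log2(0) treated as 0."""
    if not 0.0 <= p <= 1.0:
        raise ValueError("p must be a probability in [0, 1]")
    h = 0.0
    for x in (p, 1.0 - p):
        if x > 0.0:  # skip the 0*log2(0) term instead of evaluating log2(0)
            h -= x * math.log2(x)
    return h


def bernoulli_entropy_simplified(p):
    """Simplified form p*log2((1-p)/p) - log2(1-p); undefined at p = 0 and p = 1."""
    return p * math.log2((1.0 - p) / p) - math.log2(1.0 - p)


print(bernoulli_entropy(0.5))  # 1.0
print(bernoulli_entropy(0.0))  # 0.0
print(bernoulli_entropy(0.3), bernoulli_entropy_simplified(0.3))  # same value up to rounding
```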

Calculating receiver entropy

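If you think about it carefully, the receiver side is also a Bernoulli entropy. We know that $P(R=1) = p + \epsilon - 2\epsilon p$ and $P(R=0) = 1 - p - \epsilon + 2\epsilon p$. If we let $q = P(R=1) = p + \epsilon - 2\epsilon p$, then it's as simple as re-writing the Bernoulli entropy as:

$$H(R) = H_b(q) = -q\log_2(q) - (1-q)\log_2(1-q) \tag{2}$$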

Expanding this just for completeness results in:

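$$H(R) = -(p+\epsilon-2\epsilon p)\log_2(p+\epsilon-2\epsilon p) - (1-p-\epsilon+2\epsilon p)\log_2(1-p-\epsilon+2\epsilon p) \tag{2}$$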

We set equation 2 to be either of the above equations since they're the same. Again, it's simpler to use the former version because we can program it directly: simply find $P(R=1)$ and pass it to a Bernoulli entropy function. It's interesting to see what happens when we vary $\epsilon$. Figure 4 shows what happens to $H(R)$ when we sweep $p$ and parametrize $\epsilon$ simultaneously. Here are a few interesting observations:
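Following the advice to reuse a Bernoulli entropy function, equation 2 can be sketched as below, assuming a BSC with $P(S=1) = p$ and crossover probability `eps`. The loop prints $H(R)$ over a coarse grid of $p$ for a few values of $\epsilon$, a text-only stand-in for the curves in figure 4; the function names are hypothetical.

```python
import math


def bernoulli_entropy(p):
    """Bernoulli entropy H_b(p) in bits, treating 0*log2(0) as 0."""
    h = 0.0
    for x in (p, 1.0 - p):
        if x > 0.0:
            h -= x * math.log2(x)
    return h


def receiver_entropy(p, eps):
    """Equation 2: H(R) = H_b(q) with q = P(R=1) = p + eps - 2*eps*p."""
    q = p + eps - 2.0 * eps * p
    return bernoulli_entropy(q)


# Coarse sweep of p for a few noise levels (a text version of figure 4's curves).
for eps in (0.0, 0.1, 0.5, 0.9, 1.0):
    row = [round(receiver_entropy(i / 10, eps), 3) for i in range(11)]
    print(f"eps={eps}: {row}")
```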

- When $\epsilon = 0$ (the thick blue line), observe that $H(R) = H(S)$. This should be expected because when there is no noise, we should be able to retrieve the same information.

- When $\epsilon = 1$ (the green line superimposed on the blue line), $H(R) = H(S)$ too! $\epsilon = 1$ doesn't mean the noise is at its maximum; rather, the noise inverts every 1 into a 0 and every 0 into a 1. Essentially, we have the "same" information, but all bits are flipped. This is like taking the negative of an image: we have the same information, but its representation is inverted.

- When $\epsilon = 0.5$ (the yellow line), $H(R) = 1$, the maximum entropy of a binary variable, regardless of $p$. No matter how many 1s and 0s you send over the channel, each bit is flipped 50% of the time. This yields maximum uncertainty, and the receiver can never "understand" what it's receiving. For example, suppose the source sends a thousand 1s continuously, but the receiver gets roughly 500 1s and 500 0s. From the receiver's perspective, there is no way to make sense of this information.

- When $0 < \epsilon < 0.5$, the entropy curve rises closer and closer to a flat line at 1 as $\epsilon$ increases. When $0.5 < \epsilon < 1$, the curve drops again on the sides and returns to the $H(S)$ curve at $\epsilon = 1$. In the region $0 < \epsilon < 0.5$, the uncertainty increases due to increasing noise; however, in the region $0.5 < \epsilon < 1$, the uncertainty goes back down because a channel that flips bits with probability $\epsilon$ behaves like an inverter followed by a channel with noise $1 - \epsilon$, and the inversion by itself preserves the information.

- Lastly, if you look closely at equation 2, $P(R=1) = p + \epsilon - 2\epsilon p$ is symmetric in $p$ and $\epsilon$. So if we sweep $\epsilon$ (as the x-axis) and parametrize $p$ (as the different curves), we get the same trends as in figure 4.
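The observations above can be spot-checked numerically. The sketch below defines a small, hypothetical `receiver_entropy` helper (equation 2 for a BSC) and asserts each observation on a handful of $p$ and $\epsilon$ values; it is a sanity check on a grid, not a proof.

```python
import math


def bernoulli_entropy(p):
    """Bernoulli entropy H_b(p) in bits, treating 0*log2(0) as 0."""
    h = 0.0
    for x in (p, 1.0 - p):
        if x > 0.0:
            h -= x * math.log2(x)
    return h


def receiver_entropy(p, eps):
    """Equation 2 for a BSC: H(R) = H_b(p + eps - 2*eps*p)."""
    return bernoulli_entropy(p + eps - 2.0 * eps * p)


def close(a, b):
    return math.isclose(a, b, abs_tol=1e-12)


for p in (0.0, 0.1, 0.25, 0.5, 0.8):
    h_s = bernoulli_entropy(p)
    assert close(receiver_entropy(p, 0.0), h_s)  # eps = 0: no noise, H(R) = H(S)
    assert close(receiver_entropy(p, 1.0), h_s)  # eps = 1: every bit inverted, H(R) = H(S)
    assert close(receiver_entropy(p, 0.5), 1.0)  # eps = 0.5: maximum uncertainty for any p
    for eps in (0.1, 0.3):
        # eps and 1 - eps give the same H(R): the curves fold back toward H(S)
        assert close(receiver_entropy(p, eps), receiver_entropy(p, 1.0 - eps))
        # q is symmetric in p and eps, so swapping their roles leaves H(R) unchanged
        assert close(receiver_entropy(p, eps), receiver_entropy(eps, p))

print("all observations hold on the sampled grid")
```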

Take note of these interpretations for now. We'll demonstrate these with images in a bit.

Calculating noise entropy

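By definition of conditional entropy we have:

$$H(R \mid S) = -\sum_{i}\sum_{j} P(r_j, s_i)\log_2 P(r_j \mid s_i)$$

We can write this out explicitly to obtain:

$$\begin{aligned} H(R \mid S) = &-P(R=0, S=0)\log_2 P(R=0 \mid S=0) - P(R=1, S=0)\log_2 P(R=1 \mid S=0) \\ &- P(R=0, S=1)\log_2 P(R=0 \mid S=1) - P(R=1, S=1)\log_2 P(R=1 \mid S=1) \end{aligned}$$

We already know:

$$P(R=0 \mid S=0) = P(R=1 \mid S=1) = 1-\epsilon, \qquad P(R=1 \mid S=0) = P(R=0 \mid S=1) = \epsilon$$

We need to find $P(R=0, S=0)$, $P(R=1, S=0)$, $P(R=0, S=1)$, and $P(R=1, S=1)$. Simple! Each joint probability is the channel probability times the source probability, $P(R=r, S=s) = P(R=r \mid S=s)\,P(S=s)$:

$$P(R=0, S=0) = (1-\epsilon)(1-p), \quad P(R=1, S=0) = \epsilon(1-p), \quad P(R=0, S=1) = \epsilon p, \quad P(R=1, S=1) = (1-\epsilon)p$$

Plugging in the equations and simplifying results in:

$$\begin{aligned} H(R \mid S) &= -(1-\epsilon)(1-p)\log_2(1-\epsilon) - \epsilon(1-p)\log_2(\epsilon) - \epsilon p\log_2(\epsilon) - (1-\epsilon)p\log_2(1-\epsilon) \\ &= -\epsilon\log_2(\epsilon) - (1-\epsilon)\log_2(1-\epsilon) \end{aligned}$$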

Lo and behold, the noise entropy is simply the Bernoulli entropy of $\epsilon$. Equation 3 shows this, and we already know its trend.

$$H(R \mid S) = H_b(\epsilon) = -\epsilon\log_2(\epsilon) - (1-\epsilon)\log_2(1-\epsilon) \tag{3}$$

One important observation is that $H(R \mid S)$ depends only on $\epsilon$ and is independent of $p$: no manipulation of the source will ever affect the noise.
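To confirm that the $p$ terms really cancel, the sketch below evaluates the definition of $H(R \mid S)$ term by term from the BSC joint probabilities and compares it with $H_b(\epsilon)$ for several source distributions. The function names are illustrative.

```python
import math


def bernoulli_entropy(p):
    """Bernoulli entropy H_b(p) in bits, treating 0*log2(0) as 0."""
    h = 0.0
    for x in (p, 1.0 - p):
        if x > 0.0:
            h -= x * math.log2(x)
    return h


def noise_entropy_by_definition(p, eps):
    """H(R|S) = -sum over (r, s) of P(r, s) * log2 P(r|s), for a BSC with P(S=1) = p."""
    p_s = {0: 1.0 - p, 1: p}
    # Channel probabilities P(R=r | S=s), keyed by (r, s)
    p_r_given_s = {(0, 0): 1.0 - eps, (1, 0): eps, (0, 1): eps, (1, 1): 1.0 - eps}
    h = 0.0
    for (_r, s), cond in p_r_given_s.items():
        joint = cond * p_s[s]  # P(R=r, S=s) = P(R=r | S=s) * P(S=s)
        if joint > 0.0:  # zero-probability pairs contribute nothing
            h -= joint * math.log2(cond)
    return h


eps = 0.2
for p in (0.0, 0.1, 0.5, 0.9):
    print(f"p={p}: H(R|S)={noise_entropy_by_definition(p, eps):.6f}, H_b(eps)={bernoulli_entropy(eps):.6f}")
```

The two columns agree for every $p$, which is exactly the statement of equation 3.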

Calculating mutual information

Recall that we can calculate the mutual information by:

$$I(R,S) = H(R) - H(R \mid S) \tag{4}$$

If you forget, draw the Venn diagram or refer to the module 2 discussion on mutual information. It is better to program this equation after writing the functions for $H(R)$ and $H(R \mid S)$ than to re-write the entire equation. However, if you are a masochist and prefer to write down the entire derivation, it's entirely up to you. If we plug in equations 2 and 3 we get:

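$$I(R,S) = \left[-(p+\epsilon-2\epsilon p)\log_2(p+\epsilon-2\epsilon p) - (1-p-\epsilon+2\epsilon p)\log_2(1-p-\epsilon+2\epsilon p)\right] - \left[-\epsilon\log_2(\epsilon) - (1-\epsilon)\log_2(1-\epsilon)\right] \tag{4}$$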

Or you can solve this using our definition of mutual information:

$$I(R,S) = \sum_{i}\sum_{j} P(r_j, s_i)\log_2\left(\frac{P(r_j, s_i)}{P(r_j)\,P(s_i)}\right)$$

You should end up with the same equation. We'll use the first form of equation 4, $H(R) - H(R \mid S)$, for our programs due to its simplicity. Aside from getting the average mutual information for the entire system, it is interesting to determine the mutual information on a per-outcome basis. We write:
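Both routes to the mutual information can be compared in a few lines. The sketch below (hypothetical function names) computes equation 4 as $H(R) - H(R \mid S)$ and, separately, the double sum over the joint distribution; for any valid $p$ and $\epsilon$ the two should agree up to floating-point error.

```python
import math


def bernoulli_entropy(p):
    """H_b(p) in bits, treating 0*log2(0) as 0."""
    h = 0.0
    for x in (p, 1.0 - p):
        if x > 0.0:
            h -= x * math.log2(x)
    return h


def mutual_information(p, eps):
    """Equation 4: I(R,S) = H(R) - H(R|S) = H_b(p + eps - 2*eps*p) - H_b(eps)."""
    return bernoulli_entropy(p + eps - 2.0 * eps * p) - bernoulli_entropy(eps)


def mutual_information_by_definition(p, eps):
    """I(R,S) = sum over (r, s) of P(r,s) * log2[P(r,s) / (P(r) P(s))]."""
    p_s = {0: 1.0 - p, 1: p}
    p_r_given_s = {(0, 0): 1.0 - eps, (1, 0): eps, (0, 1): eps, (1, 1): 1.0 - eps}
    joint = {(r, s): p_r_given_s[(r, s)] * p_s[s] for r in (0, 1) for s in (0, 1)}
    p_r = {r: joint[(r, 0)] + joint[(r, 1)] for r in (0, 1)}
    return sum(
        pj * math.log2(pj / (p_r[r] * p_s[s]))
        for (r, s), pj in joint.items()
        if pj > 0.0  # impossible pairs contribute nothing (0 * log 0 = 0)
    )


for p, eps in ((0.5, 0.0), (0.3, 0.1), (0.5, 0.5), (0.8, 0.25)):
    print(p, eps, round(mutual_information(p, eps), 6), round(mutual_information_by_definition(p, eps), 6))
```

A noiseless channel with $p = 0.5$ carries a full bit per symbol, while $\epsilon = 0.5$ drives the mutual information to zero.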

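$$I(r_j, s_i) = \log_2\left(\frac{P(s_i \mid r_j)}{P(s_i)}\right) = \log_2\left(\frac{P(r_j \mid s_i)}{P(r_j)}\right) \tag{5}$$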

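Equation 5 measures how much mutual information each pair of received and sent symbols shares. This has a nice interpretation, where $I(r_j, s_i)$ is the mutual information about $s_i$ contained in $r_j$. Again, recall the Venn diagram. For example, $I(R=1, S=1)$ tells us the mutual information about $S=1$ contained in $R=1$. In a way, it tells us how much of $S=1$ is in $R=1$, and so on. Averaging $I(r_j, s_i)$ over the joint distribution, $\sum_i\sum_j P(r_j, s_i)\,I(r_j, s_i)$, gives back $I(R,S)$.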
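Equation 5 can be evaluated for all four (received, sent) pairs of a BSC. This sketch assumes $0 < p < 1$ and $0 < \epsilon < 1$ so that no probability is zero; `pointwise_mutual_information` is a hypothetical name. Note that individual values can be negative: a negative $I(r_j, s_i)$ means that observing $r_j$ makes $s_i$ less likely than it was before.

```python
import math


def pointwise_mutual_information(p, eps):
    """Equation 5 for a BSC: I(r, s) = log2[P(R=r | S=s) / P(R=r)] for all four (r, s) pairs.

    Assumes 0 < p < 1 and 0 < eps < 1 so that no probability is zero.
    """
    p_r = {1: p + eps - 2.0 * eps * p, 0: 1.0 - p - eps + 2.0 * eps * p}
    # Channel probabilities P(R=r | S=s), keyed by (r, s)
    p_r_given_s = {(0, 0): 1.0 - eps, (1, 0): eps, (0, 1): eps, (1, 1): 1.0 - eps}
    return {(r, s): math.log2(cond / p_r[r]) for (r, s), cond in p_r_given_s.items()}


pmi = pointwise_mutual_information(p=0.5, eps=0.1)
for (r, s), value in sorted(pmi.items()):
    print(f"I(R={r}, S={s}) = {value:+.3f} bits")
```

With $p = 0.5$ and $\epsilon = 0.1$, a received 1 carries positive information about a sent 1 and negative information about a sent 0.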
