Information and entropy

Contents

Before We Begin ...

From the last module's introduction, information occurs in everyday life, and it consists of two aspects: surprise and meaning. We would like to emphasize that our focus will be on the mathematics of surprise or uncertainty. Whenever you study a subject, you also experience a subtle application of information theory. For example, you are asked to review your elementary algebra again. You have confidence that the topic is easy, and you only need very little "brainpower" for the subject. It looks and feels easy because you are familiar with the material. You already have the information for the topic. Suppose you were asked to review your calculus subjects (e.g., Math 20 series) you may find it more challenging because most theories may or may not be familiar to you. There is a higher degree of uncertainty. This time you need to exert more effort in studying. If you were asked to take on a new theory that you have no clue about, you have maximum uncertainty about that theory. However, once you have given enough time and effort to study that theory, that uncertainty now becomes acquired information for you. You may not need too much brainpower to review or teach that topic again. This leads to an important concept that may repeat in future discussions. We experience an uncertainty about a topic that we don't know about. However, when we "receive" that uncertainty, it becomes information.

There is a subtle tradeoff between uncertainty and brainpower for a particular subject. You will start to notice this later in the course. Observe that when there is high uncertainty (e.g., a completely new topic), our brain exerts effort to study a material. Whenever we have low uncertainty (e.g., review a familiar subject), we exert less effort for the subject. The amount of brainpower that we use can be analogous to computing power. The uncertainty can be associated with the data that we need to process. This example shows where information theory and complexity mix together. If we are given a similar problem, what would the best solution be? Information theory does not tell us how to solve a problem because it is only a measurement. The solutions are up to us. Going back to our study example, if we need to study a completely new topic, what are our options? Do we spend so much time on the material to cover the bulk of it? How much brainpower do we use? Or can we cut the material into chunks so that we can process it with optimum time and power? The solution is up to us. We just need to be creative.

Deriving Information

Shannon has a very nice comprehensive introduction on how he formulated his theory [2]. Let's try to summarize his approach in a different way [3]. Remember, our mathematical definition of information is the measurement of surprise. Let's say we have an experiment with two independent events and . Shannon pointed out important properties of information:

- should be a decreasing function of . The same goes for event .

- If the two events have then it should follow that . Again, following that the more surprising event should have higher information.

- Since both events are independent, then . Also, following from the previous item: .

Let's look at a simple example. Suppose we'll be drawing a card from a pack of 52 casino cards. Suppose we have we get the probabilities where the drawn card is:

- A club. Let this be event .

- An ace. Let this be event .

- An ace of clubs. Let this be event .

The equivalent probabilities would be:

Since we know the probabilities and the desired properties for Shannon's theorem, we can observe the following. because it is more surprising to draw an ace compared to drawing any card that is a club. because it is a lot more surprising to get the ace of clubs compared to the individual events. Moreover, our intuition tells us that . The question is, what kind of function should information be if we know the probabilities of each event? After hours of thinking, Shannon came up with:

-

(1)

-

Which can also be re-written as the equation below because of the law of logarithms .

-

(1)

-

So either way works and we'll call them equation 1. Let's apply these in action, if we calculate the information for events , , and .

It satisfies everything we agreed upon!

It's simple and it agrees well. There's a special case say for some event . This leads to . This breaks those assumptions that Shannon made. Because of this we'll have to make an exemption where then . So equation 1 is more appropriately written as:

-

(1)

-

Bits, Bans, and Nats

You might wonder why we used base 2 for the log function. This is just for convenience because using the equation in base 2 suits well with our binary computations. The units of information if taken in base 2 are in bits. If taken in base 3 we call them trits. If taken in base 10 we call them bans, and if taken in base , we call them nats. The table below shows this comparison.

| base | units | |

|---|---|---|

| 2 | bits (from binary) | 2.00 |

| 3 | trits (from trinary) | 1.26 |

| nats (from natural logarithm) | 1.38 | |

| 10 | bans | 0.602 |

Information Examples

In summary, information can be thought of as the amount of surprise at seeing an event. Note that a highly probable outcome is not surprising. Consider the following events:

| Event | Probability | Information (Surprise) |

|---|---|---|

| Someone tells you . | ||

| You got the wrong answer on a 4-choice multiple choice question. | ||

| You guessed correctly on a 4-choice multiple choice question. | ||

| You got the correct answer in a True or False question. | ||

| You rolled a seven on rolling a pair of dice. | ||

| Winning the Ultra Lotto 6/58 jackpot. |

Entropy

Information is a measure of surprise for one event only. We are also interested in a collection of events encapsulated with some random variable . Recall that a random variable contains a set of outcomes and each outcome has an associated probability . Each outcome also has its own set of information . We can get the mean of all . We call this entropy which we denote with :

-

(2)

-

Entropy is literally just the mean of information for some random variable . Let's look at a few examples. Consider some random variable with outcomes . All outcomes have a probability of . What is ? Simple!

Therefore, the average information for the simple uniform distribution is bits. Let's take a look at another example. Suppose we flip a fair coin three times. Let the random variable be the sum of heads in those three flips. What is ? It's easier to tabulate the data.

Summing all terms we get:

Bounds of Entropy

Entropy has bounds, meaning there is a lower and upper limit to this value. Just like in any system, these bounds serve as limitations to our measurement. In a nutshell, for any random variable with outcomes, the bounds of entropy is:

-

(3)

-

The lower bound occurs if and only if one of the outcomes has absolute certainty (i.e., ). This is trivial. Recall that for a random variable with outcomes and their associated probabilities . The sum of all probabilities must sum up to 1. Such that:

If one of the elements has absolute certainty: then that means all other probabilities need to be where . Solving for if this happens.

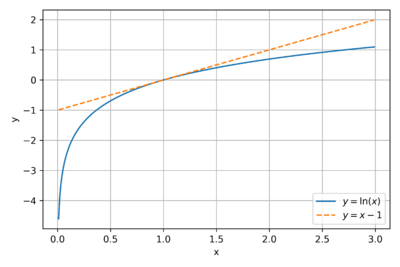

The upper bound is a bit tricky. First we need to recognize a fact that with equality if and only if . When we say "with equality" then that means the equal sign holds true if and only if the condition is met. This is trivial: if and only if . We can also observe and from figure 1.

Second, we need to appreciate what Gibbs inequality tells us. Suppose we have two probability distributions and . Also note that and . Gibbs inequality says:

-

(4)

-

Simplifying the right handside results in:

In other words, Gibbs inequality says that:

-

(5)

-

If and only if for all . Take note of equation 5 and its condition for being true. We will use this result for deriving the upper bound. Now, let's consider a random variable with probability distribution . Let's find what kind of distribution maximizes the entropy function. We have:

The second step works because we know that . The last step works because of Gibbs inequality (i.e., ). Therefore works if and only if for all .

In plain English, this means that we attain maximum entropy if and only if all outcomes are equiprobable!

In summary, the bounds of entropy can be summarized as .

- The lower bound occurs if at least one outcome has absolute certainty .

- The upper bound occurs if all outcomes are equiprobable . Assuming there are outcomes.

Interpreting Entropy

Examples

It's Your Urn Again

Dicey

Odd Ball Problem

References

- ↑ Sousa, David A. 2006. How the Brain Learns. Thousand Oaks, Calif: Corwin Press.

- ↑ Shannon, C. E., & Weaver, W., The mathematical theory of communication. Urbana: University of Illinois Press. 1949.

- ↑ Applebaum, D. , Probability and Information: An Integrated Approach, Cambridge University Press, 2008.