Difference between revisions of "Joint entropy, conditional entropy, and mutual information"

Ryan Antonio (talk | contribs) |

Ryan Antonio (talk | contribs) |

||

| (13 intermediate revisions by the same user not shown) | |||

| Line 1: | Line 1: | ||

== Joint Entropies == | == Joint Entropies == | ||

| − | In this module, we'll discuss several extensions of entropy. Let's begin with joint entropy. Suppose we have a random variable <math> X </math> with elements <math> \{x_1, x_2,..., x_i, ... , x_n \} </math> and random variable <math> Y </math> with elements <math> \{y_1, y_2, ..., y_j, ..., y_m \}</math>. We define the joint entropy of <math> | + | In this module, we'll discuss several extensions of entropy. Let's begin with joint entropy. Suppose we have a random variable <math> X </math> with elements <math> \{x_1, x_2,..., x_i, ... , x_n \} </math> and random variable <math> Y </math> with elements <math> \{y_1, y_2, ..., y_j, ..., y_m \}</math>. We define the joint entropy of <math> X </math> and <math> Y </math> as: |

{{NumBlk|::|<math> H(X,Y) = -\sum_{i=1}^n \sum_{j=1}^m P(x_i,y_j) \log_2 \left(P(x_i, y_j) \right) </math>|{{EquationRef|1}}}} | {{NumBlk|::|<math> H(X,Y) = -\sum_{i=1}^n \sum_{j=1}^m P(x_i,y_j) \log_2 \left(P(x_i, y_j) \right) </math>|{{EquationRef|1}}}} | ||

| Line 9: | Line 9: | ||

== Conditional Entropies == | == Conditional Entropies == | ||

| − | There are two steps to understand conditional entropies. The first is the uncertainty of a random variable caused by a single outcome only. Suppose we have the same random variables <math> X </math> and <math> Y </math> defined earlier in joint entropies. Let's denote <math> P(y_j|x_i) </math> as the conditional probability of <math> y_j </math> when event <math> x_i </math> happened. We define the entropy <math> H(Y | x_i) </math> as the entropy of the random variable <math> Y </math> given a <math> x_i </math> happened. Take note that we're only interested in the entropy of <math> Y </math> when only the outcome <math> x_i </math> occurred. Mathematically this is: | + | There are two steps to understand conditional entropies. The first is the uncertainty of a random variable caused by a single outcome only. Suppose we have the same random variables <math> X </math> and <math> Y </math> defined earlier in joint entropies. Let's denote <math> P(y_j|x_i) </math> as the conditional probability of <math> y_j </math> when event <math> x_i </math> happened. We define the entropy <math> H(Y | x_i) </math> as the entropy of the random variable <math> Y </math> given a <math> x_i </math> happened. In other words, this is the average information of all the outcomes of <math> Y </math> when event <math> x_i </math> happens. Take note that we're only interested in the entropy of <math> Y </math> when only the outcome <math> x_i </math> occurred. Mathematically this is: |

{{NumBlk|::|<math> H(Y|x_i) = -\sum_{j=1}^m P(y_j|x_i) \log_2 \left(P(y_j| x_i) \right) </math>|{{EquationRef|2}}}} | {{NumBlk|::|<math> H(Y|x_i) = -\sum_{j=1}^m P(y_j|x_i) \log_2 \left(P(y_j| x_i) \right) </math>|{{EquationRef|2}}}} | ||

| − | Just to repeat because it may be confusing, equation 2 just pertains to the uncertainty when only a ''single event'' happened. We can extend this to the total entropy <math> Y </math> when ''any'' of the outcomes in <math> X </math> happens. If we treat <math> H(Y|\cdot) </math> contains a range of <math> \{H(Y|x_1), H(Y|x_2), H(Y|x_3),...,H(Y|x_n) \} </math> and the probability distribution for each associated <math> H(Y|x_i) </math> is <math> \{P(x_1), P(x_2), P(x_3), ... P(x_n) \} </math> so that <math> H(Y|\cdot) </math> is a function of <math> X </math>. Then we define <math> H(Y|X) </math> as the conditional probability of <math> Y </math> given <math> X </math> as: | + | Just to repeat because it may be confusing, equation 2 just pertains to the uncertainty when only a ''single event'' happened. We can extend this to the total entropy <math> Y </math> when ''any'' of the outcomes in <math> X </math> happens. If we treat <math> H(Y|\cdot) </math> like a random variable that contains a range of <math> \{H(Y|x_1), H(Y|x_2), H(Y|x_3),...,H(Y|x_n) \} </math> and the probability distribution for each associated <math> H(Y|x_i) </math> is <math> \{P(x_1), P(x_2), P(x_3), ... P(x_n) \} </math> so that <math> H(Y|\cdot) </math> is a function of <math> X </math>. Then we define <math> H(Y|X) </math> as the conditional probability of <math> Y </math> given <math> X </math> as: |

| − | {{NumBlk|::|<math> H(Y|X) = E(H(Y|\cdot)) = | + | {{NumBlk|::|<math> H(Y|X) = E(H(Y|\cdot)) = \sum_{i=1}^n P(x_i) H(Y|x_i) </math>|{{EquationRef|3}}}} |

Substituting equation 2 to equation 3 and knowing that <math> P(y_j|x_i)P(x_i) = P(x_i,y_j) </math>. We can re-write this as: | Substituting equation 2 to equation 3 and knowing that <math> P(y_j|x_i)P(x_i) = P(x_i,y_j) </math>. We can re-write this as: | ||

| Line 80: | Line 80: | ||

\begin{align} | \begin{align} | ||

H(Y|X) &= -\sum_{i=1}^n \sum_{j=1}^m P(x_i,y_j) \log_2 (P(y_j|x_i)) \\ | H(Y|X) &= -\sum_{i=1}^n \sum_{j=1}^m P(x_i,y_j) \log_2 (P(y_j|x_i)) \\ | ||

| − | &= -P(a | + | &= -P(w,a) \log_2(P(w|a)) -P(x,a) \log_2(P(x|a)) -P(y,b) \log_2(P(y|b)) -P(z,b) \log_2(P(z|b)) \\ |

&= -\frac{1}{3} \log_2 \left(\frac{2}{3}\right) -\frac{1}{6} \log_2 \left(\frac{1}{3}\right) -\frac{1}{4} \log_2 \left(\frac{1}{2}\right) -\frac{1}{4} \log_2 \left(\frac{1}{2}\right) \\ | &= -\frac{1}{3} \log_2 \left(\frac{2}{3}\right) -\frac{1}{6} \log_2 \left(\frac{1}{3}\right) -\frac{1}{4} \log_2 \left(\frac{1}{2}\right) -\frac{1}{4} \log_2 \left(\frac{1}{2}\right) \\ | ||

&= 0.959 \ \textrm{bits} | &= 0.959 \ \textrm{bits} | ||

| Line 110: | Line 110: | ||

{{NumBlk|::|<math> H(Y,X) = H(Y) + H(X|Y) </math>|{{EquationRef|5}}}} | {{NumBlk|::|<math> H(Y,X) = H(Y) + H(X|Y) </math>|{{EquationRef|5}}}} | ||

| − | If <math> X </math> and <math> Y </math> are independent | + | If <math> X </math> and <math> Y </math> are independent then: |

{{NumBlk|::|<math> H(X,Y) = H(X) + H(Y) </math>|{{EquationRef|6}}}} | {{NumBlk|::|<math> H(X,Y) = H(X) + H(Y) </math>|{{EquationRef|6}}}} | ||

| Line 120: | Line 120: | ||

With the final discussion on the properties of joint and conditional entropies, we also define ''mutual information'' as the information content of <math> Y </math> contained within <math> X </math> written as: | With the final discussion on the properties of joint and conditional entropies, we also define ''mutual information'' as the information content of <math> Y </math> contained within <math> X </math> written as: | ||

| − | {{NumBlk|::|<math> I(X,Y) = H(Y) - H(Y|X) </math>|{{EquationRef| | + | {{NumBlk|::|<math> I(X,Y) = H(Y) - H(Y|X) </math>|{{EquationRef|7}}}} |

Or: | Or: | ||

| − | {{NumBlk|::|<math> I(X,Y) = H(X) - H(X|Y) </math>|{{EquationRef| | + | {{NumBlk|::|<math> I(X,Y) = H(X) - H(X|Y) </math>|{{EquationRef|7}}}} |

If we plug in the definitions of entropy and conditional entropy, mutual information in expanded form is: | If we plug in the definitions of entropy and conditional entropy, mutual information in expanded form is: | ||

| − | {{NumBlk|::|<math> I(X,Y) = \sum_{i=1}^n \sum_{j=1}^m P(x_i,y_j) \log_2 \left(\frac{P(x_i,y_j)}{P(x_i)P(y_j)} \right) </math>|{{EquationRef| | + | {{NumBlk|::|<math> I(X,Y) = \sum_{i=1}^n \sum_{j=1}^m P(x_i,y_j) \log_2 \left(\frac{P(x_i,y_j)}{P(x_i)P(y_j)} \right) </math>|{{EquationRef|8}}}} |

It also follows that: | It also follows that: | ||

* <math> I(X,Y) = I(Y,X) </math>. This is trivial from equation 7. | * <math> I(X,Y) = I(Y,X) </math>. This is trivial from equation 7. | ||

| − | * If <math> X </math> and <math> Y </math> are independent, then <math> I(X,Y) = 0 </math>. This is also trivial from equation | + | * If <math> X </math> and <math> Y </math> are independent, then <math> I(X,Y) = 0 </math>. This is also trivial from equation 8. |

| − | As a short example, we can use the binary tree problem again. Let's compute <math> I(X,Y) </math>. We simply need to use equation | + | As a short example, we can use the binary tree problem again. Let's compute <math> I(X,Y) </math>. We simply need to use equation 7: <math> I(X,Y) = H(Y) - H(Y|X) </math>. We already know <math> H(Y|X) = 0.959 </math> bits. <math> H(Y) </math> is computed by first knowing <math>\{P(w), P(x), P(y), P(z) \} </math>. Recall that: |

<math> P(y_j) = \sum_{i=1}^n P(y_j|x_i)P(x_i) </math> | <math> P(y_j) = \sum_{i=1}^n P(y_j|x_i)P(x_i) </math> | ||

| − | But then, for each outcome in <math> Y </math> has the same probability of the joint entropy. For example, event <math> w </math> only occurs if event <math> a </math> occurred first. Event <math> | + | But then, for each outcome in <math> Y </math> has the same probability of the joint entropy. For example, event <math> w </math> only occurs if event <math> a </math> occurred first. Event <math> y </math> occurs only if event <math> b </math> occurred first. So it means that: |

* <math> P(w) = P(w|a)P(a) = P(w,a) = \frac{1}{3} </math> | * <math> P(w) = P(w|a)P(a) = P(w,a) = \frac{1}{3} </math> | ||

| Line 148: | Line 148: | ||

Therefore, <math> H(Y) = H(X,Y) = 1.959 </math> bits. Therefore, the information <math> I(X,Y) = H(Y) - H(Y|X) = 1.959 - 0.959 = 1.000 </math> bits. | Therefore, <math> H(Y) = H(X,Y) = 1.959 </math> bits. Therefore, the information <math> I(X,Y) = H(Y) - H(Y|X) = 1.959 - 0.959 = 1.000 </math> bits. | ||

| + | == Chain Rule for Conditional Entropy == | ||

| − | + | What happens when we deal with more than two random variables? To facilitate the discussion, let us recall the chain rule for joint distributions. | |

| − | + | Let <math>X_1, X_2, ..., X_n</math> be a sequence of discrete random variables. Then, their joint distribution can be factored as follows | |

| − | <math> </math> | + | <math>p(X_1, X_2, ... X_n) = p(X_1) p(X_2|X_1) p(X_3|X_1,X_2) ... p(X_n|X_1, X_2, ... X_{n-1})</math>, |

| + | |||

| + | === Chain rule for entropy === | ||

| + | The chain rule for (joint) entropy is very similar to the above expansion, but we use additions instead of multiplications. | ||

| + | |||

| + | {{NumBlk|::|<math>H(X_1, X_2, ..., X_n) = H(X_1) + H(X_2|X_1) + H(X_3|X_1,X_2) + ... + H(X_n | X_1, X_2, ..., X_{n-1}) </math>|{{EquationRef|9}}}} | ||

| + | |||

| + | |||

| + | Although we do not supply a complete proof here, this fact should not be too surprising since entropy operates on logarithms of probabilities and logarithms of product terms expand to sums of logarithms. | ||

| + | |||

| + | === Proof of chain rule for <math>n=3</math> === | ||

| + | |||

| + | Let us see that the statement is true for <math>n=3</math>. Let <math>X_1,X_2,X_3</math> be three discrete random variables. The idea with the proof is that we operate on two random variables at a time, since prior to the chain rule we only know that <math>H(X,Y) = H(X) + X(Y|X)</math>. We can write <math>H(X_1, X_2,X_3) = H((X_1, X_2),X_3)</math>, where we bundle <math>X_1</math> and <math>X_2</math> into one random variable <math>(X_1, X_2)</math>. | ||

| + | |||

| + | The proof now proceeds as follows: | ||

| + | |||

| + | <math> H(X_1, X_2, X_3) = H((X_1, X_2),X_3 ) = H(X_1, X_2) + H(X_3 | X_1, X_2) = [H(X_1) + H(X_2 | X_1)] + H(X_3 | X_1, X_2)</math> | ||

| + | |||

| + | One interpretation of the chain rule shown is that to obtain the total information (joint entropy) of <math>X_1,X_2,X_3</math> as a whole, we can | ||

| + | * obtain information about <math>X_1</math> first without any prior knowledge: <math>H(X_1)</math>, then | ||

| + | * obtain information about <math>X_2</math> with knowledge of <math>X_1</math>: <math>H(X_2|X_1)</math>, then | ||

| + | * obtain information about <math>X_3</math> with knowledge of <math>X_1</math> and <math>X_2</math>: <math>H(X_3 | X_1, X_2)</math>. | ||

| + | |||

| + | For <math>n>3</math>, we can proceed by induction. Below is the sketch of the proof: | ||

| + | * (Base step) For a fixed <math> n=k </math>, assume that ''any'' collection of <math>k</math> random variables satisfies the chain rule. | ||

| + | * (Induction step) When <math> n = k+1 </math>, write the joint entropy of <math>k+1</math> random variables as follows: <math>H(X_1, ..., X_k, X_{k+1}) = H( (X_1, ..., X_k), X_{k+1})</math>, where the first <math>k</math> are bundled together into one random variable. | ||

| + | * Use the two-variable chain rule <math>H(X,Y) = H(X) + H(Y|X)</math> and the base step to show that <math>H(X_1, ..., X_k, X_{k+1}) = H(X_1) + H(X_2 | X_1) + ... + H(X_{k+1}|X_1, ..., X_k)</math>. | ||

| + | |||

| + | |||

| + | A few things to note: | ||

| + | * The order of "obtaining information" is irrelevant in calculating the joint entropy of multiple rvs. Write the chain rule if we proceed by obtaining information in the following order: <math>X_2, X_3, X_1</math>. | ||

| + | * The chain we have now works for ''any'' collection of random variables. Can you figure out how to simplify the chain rule for Markov chains? (The answer will be discussed in Module 3.) | ||

| + | |||

| + | == Graphical Summary == | ||

| + | |||

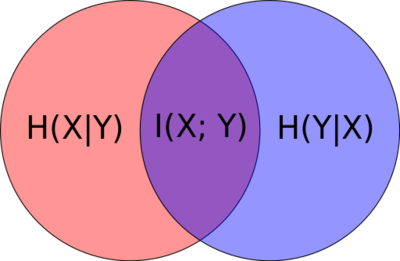

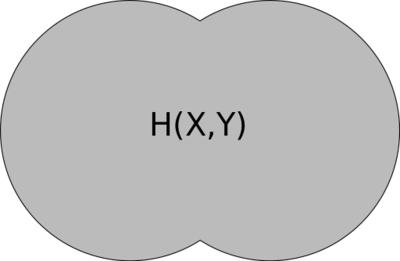

| + | Figure 2 shows how we can visualize conditional entropy and mutual information. The red and blue pertain to the individual entropies <math> H(X) </math> and <math> H(Y) </math>, respectively. Figure 3 shows what joint entropy looks like. | ||

| + | |||

| + | [[File: Venn diagram.svg|400px|thumb|center|Figure 2: Venn diagram visualizing conditional entropy and mutual information.]] | ||

| + | [[File: Venn diagram joint entropy.svg|400px|thumb|center|Figure 3: Venn diagram visualizing joint entropy.]] | ||

| + | |||

| + | From the diagrams you should be able to recall the entropy relationships on the fly. We can summarize (and derive new relationships) as: | ||

| + | |||

| + | * <math> I(X,Y) = H(X) - H(X|Y) = H(Y) - H(Y|X) </math> | ||

| + | * <math> H(X,Y) = H(X|Y) + H(Y|X) + I(X,Y) = H(X) + H(Y) - I(X,Y) </math> | ||

| + | |||

| + | {{Note| From the diagrams, it's easy to re-translate <math> H(Y|X) </math> (or <math> H(X|Y) </math>) as '''the uncertainty of <math> Y </math> without the <math> X </math> happening''' |reminder}} | ||

| + | |||

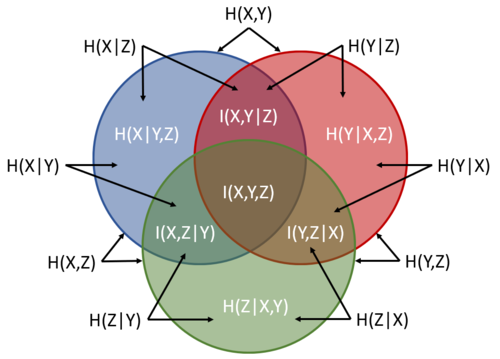

| + | Of course we, can extend this concept to three variables. For example, figure 4 shows a Venn diagram for three sets. The entire blue circle is <math> H(X) </math>, the entire red is <math> H(Y) </math>, and the entire green is <math> H(Z) </math>. The labels are there to guide you. | ||

| + | |||

| + | [[File:Entropy three sets.PNG|500px|thumb|center| Figure 4: Entropy Venn diagram from three sets. ]] | ||

| + | |||

| + | We can also derive useful relationships based on figure 4. Some of these include: | ||

| + | |||

| + | * <math>H(X,Y,Z) = H(X) + H(Y|X) + H(Z|X,Y) </math> which is consistent with equation 9. Look at the diagram carefully. | ||

| + | * <math> H(X,Y,Z) = H(X) + H(Y) + H(Z) - I(X,Y) - I(Y,Z) - I(X,Z) + I(X,Y,Z) </math> while noting that <math> I(X,Y) = H(X,Y) - H(X|Y) - H(Y|X) </math>, <math> I(X,Z) = H(X,Z) - H(X|Z) - H(Z|X) </math>, and <math> I(Y,Z) = H(Y,Z) - H(Z|Y) - H(Y|Z) </math>. | ||

| + | * <math> I(X,Y,Z) = H(X,Y,Z) - H(X) - H(Y) - H(Z) + I(X,Y) + I(Y,Z) + I(X,Z) </math> as a consequence of the previous equation. | ||

| + | * <math> I(X,Y|Z) = H(X,Y,Z) - H(Z) - H(X|Y,Z) - H(Y|X,Z) </math>. All similar combinations (e.g., <math> I(X,Z|Y) </math> and <math> I(Y,Z|X) </math> ) should be the same too. | ||

Latest revision as of 11:00, 4 March 2022

Contents

Joint Entropies

In this module, we'll discuss several extensions of entropy. Let's begin with joint entropy. Suppose we have a random variable with elements and random variable with elements . We define the joint entropy of and as:

-

(1)

-

Take note that . This is trivial. From our previous discussion about the decision trees of a game, we used joint entropies to calculate the overall entropy.

Conditional Entropies

There are two steps to understand conditional entropies. The first is the uncertainty of a random variable caused by a single outcome only. Suppose we have the same random variables and defined earlier in joint entropies. Let's denote as the conditional probability of when event happened. We define the entropy as the entropy of the random variable given a happened. In other words, this is the average information of all the outcomes of when event happens. Take note that we're only interested in the entropy of when only the outcome occurred. Mathematically this is:

-

(2)

-

Just to repeat because it may be confusing, equation 2 just pertains to the uncertainty when only a single event happened. We can extend this to the total entropy when any of the outcomes in happens. If we treat like a random variable that contains a range of and the probability distribution for each associated is so that is a function of . Then we define as the conditional probability of given as:

-

(3)

-

Substituting equation 2 to equation 3 and knowing that . We can re-write this as:

-

(3)

-

Note that it is trivial to prove that if and are independent. We'll leave it up to you to prove this. A few hints include: and if and are independent.

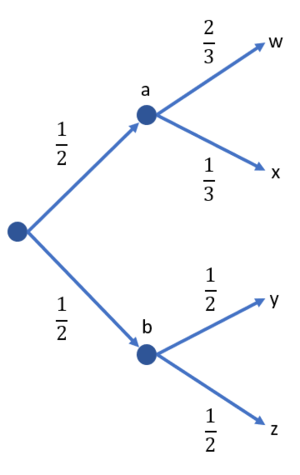

Binary Tree Example

Let's apply the first two concepts in a simple binary tree example. Suppose we let be a random variable with outcomes and probabilities . Let be the random variable with outcomes . We are also given the probabilities , , , and . Figure 1 shows the binary tree. Calculate:

(a)

(b)

(c)

(d)

Solution

(a) Use equation 2 to solve

(b) Same as in (a), use equation 2 to solve

(c) Can be solved in two ways. First is to use equation 3.

Or, we can solve it using the alternative version of equation 3. But we also need to know the joint probabilities:

(d) We already listed the joint probabilities in (c). We simply use equation 1 for this:

Important Note!

This example actually shows us an important observation:

-

(4)

-

We can easily observe that this holds true for the binary tree example. This has an important interpretation: The combined uncertainty in and (i.e., ) is the sum of that uncertainty which is totally due to (i.e., ), and that which is still due to once has been accounted for (i.e., ).

Since it also follows that:

-

(5)

-

If and are independent then:

-

(6)

-

An alternative interpretation to is the information content of which is NOT contained in .

Mutual Information

With the final discussion on the properties of joint and conditional entropies, we also define mutual information as the information content of contained within written as:

-

(7)

-

Or:

-

(7)

-

If we plug in the definitions of entropy and conditional entropy, mutual information in expanded form is:

-

(8)

-

It also follows that:

- . This is trivial from equation 7.

- If and are independent, then . This is also trivial from equation 8.

As a short example, we can use the binary tree problem again. Let's compute . We simply need to use equation 7: . We already know bits. is computed by first knowing . Recall that:

But then, for each outcome in has the same probability of the joint entropy. For example, event only occurs if event occurred first. Event occurs only if event occurred first. So it means that:

Therefore, bits. Therefore, the information bits.

Chain Rule for Conditional Entropy

What happens when we deal with more than two random variables? To facilitate the discussion, let us recall the chain rule for joint distributions.

Let be a sequence of discrete random variables. Then, their joint distribution can be factored as follows

,

Chain rule for entropy

The chain rule for (joint) entropy is very similar to the above expansion, but we use additions instead of multiplications.

-

(9)

-

Although we do not supply a complete proof here, this fact should not be too surprising since entropy operates on logarithms of probabilities and logarithms of product terms expand to sums of logarithms.

Proof of chain rule for

Let us see that the statement is true for . Let be three discrete random variables. The idea with the proof is that we operate on two random variables at a time, since prior to the chain rule we only know that . We can write , where we bundle and into one random variable .

The proof now proceeds as follows:

One interpretation of the chain rule shown is that to obtain the total information (joint entropy) of as a whole, we can

- obtain information about first without any prior knowledge: , then

- obtain information about with knowledge of : , then

- obtain information about with knowledge of and : .

For , we can proceed by induction. Below is the sketch of the proof:

- (Base step) For a fixed , assume that any collection of random variables satisfies the chain rule.

- (Induction step) When , write the joint entropy of random variables as follows: , where the first are bundled together into one random variable.

- Use the two-variable chain rule and the base step to show that .

A few things to note:

- The order of "obtaining information" is irrelevant in calculating the joint entropy of multiple rvs. Write the chain rule if we proceed by obtaining information in the following order: .

- The chain we have now works for any collection of random variables. Can you figure out how to simplify the chain rule for Markov chains? (The answer will be discussed in Module 3.)

Graphical Summary

Figure 2 shows how we can visualize conditional entropy and mutual information. The red and blue pertain to the individual entropies and , respectively. Figure 3 shows what joint entropy looks like.

From the diagrams you should be able to recall the entropy relationships on the fly. We can summarize (and derive new relationships) as:

Of course we, can extend this concept to three variables. For example, figure 4 shows a Venn diagram for three sets. The entire blue circle is , the entire red is , and the entire green is . The labels are there to guide you.

We can also derive useful relationships based on figure 4. Some of these include:

- which is consistent with equation 9. Look at the diagram carefully.

- while noting that , , and .

- as a consequence of the previous equation.

- . All similar combinations (e.g., and ) should be the same too.

![{\displaystyle H(X_{1},X_{2},X_{3})=H((X_{1},X_{2}),X_{3})=H(X_{1},X_{2})+H(X_{3}|X_{1},X_{2})=[H(X_{1})+H(X_{2}|X_{1})]+H(X_{3}|X_{1},X_{2})}](https://en.wikipedia.org/api/rest_v1/media/math/render/svg/1e814d88da43a98bed63adf8f11bc71e815d00c0)