Difference between revisions of "Coding teaser: source coding"

Ryan Antonio (talk | contribs) |

Ryan Antonio (talk | contribs) |

||

| Line 127: | Line 127: | ||

=== Channel Rate Example 2: A 6-sided Die=== | === Channel Rate Example 2: A 6-sided Die=== | ||

| − | This time, suppose we want to send the symbols of a fair 6-sided die on a channel that only has <math> C = 1 </math> bits/s. Such that <math> S </math> has outcomes <math> \{1,2,3,4,5,6 \}. Of course, all outcomes are equiprobable with <math> P(s_i) = \frac{1}{6} </math>. From here, we can determined <math> H(S) = \log_2(6) = 2.58 </math> bits/symbol. Therefore: | + | This time, suppose we want to send the symbols of a fair 6-sided die on a channel that only has <math> C = 1 </math> bits/s. Such that <math> S </math> has outcomes <math> \{1,2,3,4,5,6 \} </math>. Of course, all outcomes are equiprobable with <math> P(s_i) = \frac{1}{6} </math>. From here, we can determined <math> H(S) = \log_2(6) = 2.58 </math> bits/symbol. Therefore: |

<math> R_{\textrm{max}} = \frac{1 \ \textrm{bits/s}}{ 2.58 \ \textrm{bits/symbols}} = 0.387 \ \textrm{symbols/s} </math> | <math> R_{\textrm{max}} = \frac{1 \ \textrm{bits/s}}{ 2.58 \ \textrm{bits/symbols}} = 0.387 \ \textrm{symbols/s} </math> | ||

| Line 162: | Line 162: | ||

<math> \eta = \frac{H(S)}{L(X)} = \frac{2.58}{3} = 0.86 </math> | <math> \eta = \frac{H(S)}{L(X)} = \frac{2.58}{3} = 0.86 </math> | ||

| − | This is not that efficient but it will suffice for now. | + | This is not that efficient but it will suffice for now. Here, let's introduce the effective symbol rate <math> R_X </math> due to the new encoding: |

| + | |||

| + | {{NumBlk|::|<math> R_{X} = \frac{\eta C}{H(S)} </math>|{{EquationRef|4}}}} | ||

| + | Our encoding scheme affects how much data we're putting into the channel. If our coding efficiency isn't optimal <math> \eta \neq 1</math> then we are only utilizing a fraction of the maximum capacity <math> C</math>. Hence we needed the effective channel transmission rate <math> \eta C </math> incorporated into our effective symbol rate <math> R_X </math>. Calculating <math> R_X </math> for the encoding in codebook-3 would give: | ||

| + | |||

| + | <math> R_X = \frac{0.86 \cdot 1}{2.58} = 0.333</math> symbols/s. | ||

| + | |||

| + | Observe that <math> R_X = 0.333 < R_{\textrm{max}} = 0.387 </math>. We are not even close to the maximum rate. Can we find a better encoding? How about this, suppose we create a composite symbol that consists of the three 6-sided die symbols. For example, we can let <math> 111 </math> be one symbol, or we can let <math> 555 </math> be another symbol. If we do so, then we have around <math> 6\times6\times6 = 216 </math> unique possible outcomes for the new set of symbols. To represent 216 outcomes we need a minimum of 8 bits of codeword length (because <math> 2^8 = 256 </math>). So we can have codebook-4 as: | ||

| + | |||

| + | {| class="wikitable" | ||

| + | |- | ||

| + | ! scope="col"| Source Symbol | ||

| + | ! scope="col"| Codeword | ||

| + | |- | ||

| + | | style="text-align:center;" | 111 | ||

| + | | style="text-align:center;" | 0000_0000 | ||

| + | |- | ||

| + | | style="text-align:center;" | 112 | ||

| + | | style="text-align:center;" | 0000_0001 | ||

| + | |- | ||

| + | | style="text-align:center;" | 113 | ||

| + | | style="text-align:center;" | 0000_0010 | ||

| + | |- | ||

| + | | style="text-align:center;" | ... | ||

| + | | style="text-align:center;" | ... | ||

| + | |- | ||

| + | | style="text-align:center;" | 665 | ||

| + | | style="text-align:center;" | 1101_0110 | ||

| + | |- | ||

| + | | style="text-align:center;" | 666 | ||

| + | | style="text-align:center;" | 1101_0111 | ||

| + | |- | ||

| + | |} | ||

| + | The underscores are just there to separate the 4 bits for clarity. Codebook-4 looks really long but in practice, that's still small. So what changes? Since we have 8 bits per 3 symbols, we effectively have <math> L(X) = \frac{8}{3} </math>. Take note that all outcomes are equiprobable so dividing the 8 bits by 3 symbols is enough. This results in a coding efficiency of: | ||

| − | <math> </math> | + | <math> \eta = \frac{H(S)}{L(X)} = \frac{2.58}{2.66} = 0.97 </math> |

| − | + | Cool! We're much closer to being maximally efficient. Now, let's compute the new <math> R_X </math> for codebook-4: | |

| − | + | <math> R_X = \frac{\eta C}{H(S)} = \frac{0.97}{2.58} = 0.376 </math> symbols/s. | |

| + | |||

| + | Superb! The new <math> R_X = 0.376 </math> is close to the maximum rate of <math> R_{\textrm{max}} = 0.387 </math>. The key trick was to create a composite symbols that consists of 3 of the original source symbols, then represent them with 8 binary digits of codewords. | ||

| + | |||

| + | {{Note| In all of the discussions, we've only used different measures of information and channel rates. None of the discussions talked about a methodology of finding the best encoding scheme. This is because information theory does not tell us how to find the best encoder. It's just a measure. Keep that in mind! |reminder}} | ||

| + | |||

| + | ===Maximum Channel Capacity=== | ||

| + | |||

| + | [[File:Bsc irs sweep.PNG|400px|thumb|right|Figure 2: <math> I(R,S) </math> of a BSC with varying <math> p </math> and parametrizing <math> \epsilon </math>.]] | ||

| + | |||

| + | This time, let's look into how we can quantitatively determine the maximum channel capacity. In the last module, we comprehensively investigated the measures of <math> H(R) </math>, <math>H(R|S) </math>, and <math> I(R,S) </math> on a BSC. Recall that: | ||

| Line 176: | Line 219: | ||

| − | If you think about it, <math> I(R,S) </math> is a measure of channel quality. It tells us how much we can estimate the input from an observed output. In English, we said that <math> I(R,S) </math> is a measure of the received information contained in the source information. Ideally if there is no noise, then <math> H(R|S) = 0 </math> resulting in <math> I(R,S) = H(S) = H(R) </math>. We want this because this means all the information from the source gets to the receiver! When noise is present <math> H(R|S) \neq 0 </math>, it degrades the quality of our <math> I(R,S) </math> and the information about the receiver contained in the source is now being shared with noise. This only means one thing: ''' we want <math> I(R,S) </math> to be at maximum'''. From the previous discussion, we presented how <math> I(R,S) </math> varies with <math> p </math> and parametrizing the noise probability <math> \epsilon </math>. Figure | + | If you think about it, <math> I(R,S) </math> is a measure of channel quality. It tells us how much we can estimate the input from an observed output. In English, we said that <math> I(R,S) </math> is a measure of the received information contained in the source information. Ideally if there is no noise, then <math> H(R|S) = 0 </math> resulting in <math> I(R,S) = H(S) = H(R) </math>. We want this because this means all the information from the source gets to the receiver! When noise is present <math> H(R|S) \neq 0 </math>, it degrades the quality of our <math> I(R,S) </math> and the information about the receiver contained in the source is now being shared with noise. This only means one thing: ''' we want <math> I(R,S) </math> to be at maximum'''. From the previous discussion, we presented how <math> I(R,S) </math> varies with <math> p </math> and parametrizing the noise probability <math> \epsilon </math>. Figure 2 shows this plot (it's the same image from the last discussion). 
We can visualize <math> I(R,S) </math> like a pipe that constricts the flow of information depending on the noise parameter <math> \epsilon </math>. Figure 3 GIF shows the pipe visualization. |

| − | [[File:Pipe irs.gif|frame|center|Figure | + | [[File:Pipe irs.gif|frame|center|Figure 3: Pipe visualization for <math> I(R,S) </math>]] |

| − | Let's modify our definition of <math> I(R,S) </math> a bit. In communication theory, <math> I(R,S) </math> is a metric for transmission rate. So the units is really in bits/second. Figure | + | Let's modify our definition of <math> I(R,S) </math> a bit. In communication theory, <math> I(R,S) </math> is a metric for transmission rate. So the units is really in bits/second. Figure 2 demonstrates how much data can be transmitted per second. Interchanging between bits or bits/second might be confusing but for now, when we talk about channels (and also <math> I(R,S) </math>), let's use bits/s. Bits/s is also a measure of bandwidth. From the discussions, it is clear that we can define the '''maximum channel capacity''' <math> C </math> as: |

| − | {{NumBlk|::|<math> C = \max (I(S,R)) </math>|{{EquationRef| | + | {{NumBlk|::|<math> C = \max (I(S,R)) </math>|{{EquationRef|5}}}} |

We can re-write this as: | We can re-write this as: | ||

| − | {{NumBlk|::|<math> C = \max (H(R) - H(R|S)) </math>|{{EquationRef| | + | {{NumBlk|::|<math> C = \max (H(R) - H(R|S)) </math>|{{EquationRef|5}}}} |

| − | Here, <math> C </math> is in units of bits/second. It tells us the maximum number of data that we can transmit per unit of time. Both <math> H(R) </math> and <math> H(R|S) </math> are affected by noise parameter <math> \epsilon </math>. In a way, noise limits <math> C </math>. For example, a certain <math> \epsilon </math> can only provide a certain maximum <math> I(R,S) </math>. Looking at figure | + | Here, <math> C </math> is in units of bits/second. It tells us the maximum number of data that we can transmit per unit of time. Both <math> H(R) </math> and <math> H(R|S) </math> are affected by noise parameter <math> \epsilon </math>. In a way, noise limits <math> C </math>. For example, a certain <math> \epsilon </math> can only provide a certain maximum <math> I(R,S) </math>. Looking at figure 2, when <math> \epsilon = 0</math> (or <math> \epsilon = 1</math>) we can get a maximum <math> I(R,S) = 1</math> bits/s. When <math> \epsilon = 0.25</math> (or <math> \epsilon = 0.75 </math>) we can get a maximum <math>I(R,S) = 0.189 </math> bits/s. These occur when <math> p = 0.5 </math>. We can prove this mathematically. Let's determine <math> C </math> of a BSC given some <math> p </math> and <math> \epsilon </math>. Since we know <math> H(R) </math> follows the Bernoulli entropy <math> H_b(q) </math> where <math> q = p + \epsilon - 2\epsilon p </math>. Then we can write: |

| − | |||

| − | + | <math> H(R) = H_b(q) = -q \log_2(q) - (1-q) \log_2 (1-q) </math> | |

| − | |||

<math> \max(H(R)) = 1 </math> bits when <math> q = 0.5 </math>. Since <math> \epsilon </math> is fixed depending on the channel, we can manipulate the input probability <math> p </math> such that we can force <math> q = 0.5</math> and achieve the maximum <math> H(R) </math>. Hold on to this very important thought. We also know that <math> H(R|S) = H_b(\epsilon) </math>. Unfortunately, since <math> \epsilon </math> is fixed then we can't immediately assume <math> H_b(\epsilon) = 1</math> not unless <math> \epsilon = 0.5 </math>. Therefore we can write <math> C </math> as: | <math> \max(H(R)) = 1 </math> bits when <math> q = 0.5 </math>. Since <math> \epsilon </math> is fixed depending on the channel, we can manipulate the input probability <math> p </math> such that we can force <math> q = 0.5</math> and achieve the maximum <math> H(R) </math>. Hold on to this very important thought. We also know that <math> H(R|S) = H_b(\epsilon) </math>. Unfortunately, since <math> \epsilon </math> is fixed then we can't immediately assume <math> H_b(\epsilon) = 1</math> not unless <math> \epsilon = 0.5 </math>. Therefore we can write <math> C </math> as: | ||

| Line 202: | Line 243: | ||

Leading to: | Leading to: | ||

| − | {{NumBlk|::|<math> C = 1 - H_b(\epsilon) </math>|{{EquationRef| | + | {{NumBlk|::|<math> C = 1 - H_b(\epsilon) </math>|{{EquationRef|6}}}} |

| − | Equation 2 shows how the maximum capacity is limited by the noise <math> \epsilon </math>. It describes the pipe visualization in figure | + | Equation 2 shows how the maximum capacity is limited by the noise <math> \epsilon </math>. It describes the pipe visualization in figure 3. When <math> \epsilon = 0</math>, <math> H_b(\epsilon) = 0 </math> and our BSC can transmit <math> C = 1.0 </math> bits/s of data. When <math> \epsilon = 0.5 </math>, <math> H_b(\epsilon) = 1 </math>, the noise entropy is at maximum and our channel can transmit <math> C = 0 </math> bits/s. Clearly, we can't transmit data this way. Lastly, when <math> \epsilon = 1 </math>, then <math> H_b(\epsilon) = 0 </math> again and we're back to transmitting <math> C = 1</math> bits/s. The most important concept in determining maximum channel capacity is to use equation 5 for any channel model that you may use. |

| − | + | {{Note| Despite discussing a brief example of channel capacity, when it comes to data compression we assume a '''noiseless channel'''. Phew! So much for going through the hard efforts though. |reminder}} | |

| + | ==Huffman Coding== | ||

| − | + | As a nice sneak peak into data compression, let's look into '''Huffman coding'''. | |

| − | |||

| − | |||

| − | |||

Revision as of 23:10, 1 March 2022


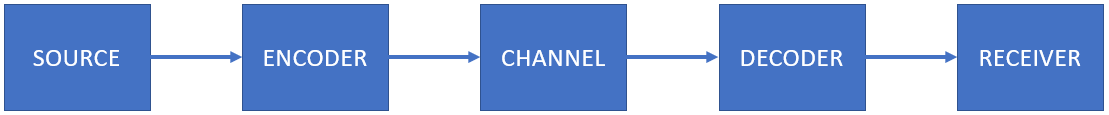

Complete Channel Model

Previously, we showed a simple channel model that consists of the source, channel, and receiver. In modern communication systems, we also add an encoder after the source and a decoder before the receiver. Figure 1 shows the complete channel model. The encoder transforms the source symbols into a code that is suitable for the channel. The decoder translates the message coming out of the channel back into source symbols for the receiver.

Let's bring back Bob and Alice into the scene again. This time let's use the complete channel model. Since most of our communication systems use binary representations, Bob needs to encode his data into binary digits before he sends it over the channel.

Let's look at a few examples. Suppose our source alphabet has the symbols of all combinations of flipping three fair coins. Then our symbols would be <math> \{TTT, TTH, THT, THH, HTT, HTH, HHT, HHH\} </math>. Bob needs to map each symbol into a binary representation such that <math> T \rightarrow 0 </math> and <math> H \rightarrow 1 </math>. This is the encoding process. Let's add new definitions:

- The binary set <math> \{0, 1\} </math> contains the code symbols.

- The encoded patterns are called the codewords. For example, <math> 000 </math> is the codeword for the symbol <math> TTT </math>, and <math> 101 </math> is the codeword for <math> HTH </math>. In practice, we let <math> X </math> be some random variable whose outcomes are codewords <math> x_i </math> associated with probabilities <math> P(x_i) </math>. For example, <math> x_1 = 000 </math> is the codeword for <math> s_1 = TTT </math>. Moreover, in our example the source symbols are equiprobable and we have a direct mapping from source symbol to codeword (i.e., <math> s_i \rightarrow x_i </math>), so it follows that the codewords are equiprobable too: <math> P(x_i) = P(s_i) = \frac{1}{8} </math>. The probability distribution of <math> X </math> can change depending on the encoding scheme.

- We usually tabulate the encoding or mapping. We call this table the codebook. It's a simple look-up table (LUT). Let's call the table below codebook-1.

| Source Symbol | Codeword |

|---|---|

| TTT | 000 |

| TTH | 001 |

| THT | 010 |

| THH | 011 |

| HTT | 100 |

| HTH | 101 |

| HHT | 110 |

| HHH | 111 |

The encoder looks at this table and encodes the message before it gets into the channel: every source symbol in the message is replaced by its codeword from codebook-1. Receivers should also know the same codebook-1 so that they can decode the message by mapping each received codeword back to its source symbol. Choosing the encoding where <math> T \rightarrow 0 </math> and <math> H \rightarrow 1 </math> is simple. This encoding is arbitrary and it seems convenient for our needs. However, nothing stops us from encoding the source symbols with a different representation. For example, we can choose an encoding where <math> T \rightarrow 1 </math> and <math> H \rightarrow 0 </math>. We can go for more complex ones like codebook-2 as shown below:

| Source Symbol | Codeword |

|---|---|

| TTT | 01 |

| TTH | 001 |

| THT | 0001 |

| THH | 00001 |

| HTT | 000001 |

| HTH | 0000001 |

| HHT | 00000001 |

| HHH | 000000001 |
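To make the look-up-table idea concrete, here is a minimal Python sketch of an encoder and decoder built from the two codebooks above. The function names `encode` and `decode` and the sample message are assumptions made for illustration; they are not part of the original module. The decoder relies on the fact that no codeword in either table is a prefix of another, so it can emit a symbol as soon as the buffered bits match a codeword.

```python
# Codebooks copied from the tables above.
SYMBOLS = ["TTT", "TTH", "THT", "THH", "HTT", "HTH", "HHT", "HHH"]
CODEBOOK_1 = {s: format(i, "03b") for i, s in enumerate(SYMBOLS)}    # 000 ... 111
CODEBOOK_2 = {s: "0" * (i + 1) + "1" for i, s in enumerate(SYMBOLS)}  # 01, 001, ...


def encode(message, codebook):
    """Replace every source symbol with its codeword and concatenate."""
    return "".join(codebook[symbol] for symbol in message)


def decode(bits, codebook):
    """Read bits one at a time and emit a symbol whenever a codeword matches.

    This only works for prefix-free codebooks, which both tables are.
    """
    inverse = {codeword: symbol for symbol, codeword in codebook.items()}
    symbols, buffer = [], ""
    for bit in bits:
        buffer += bit
        if buffer in inverse:
            symbols.append(inverse[buffer])
            buffer = ""
    if buffer:
        raise ValueError("trailing bits do not form a codeword")
    return symbols


# Hypothetical message, chosen only for illustration.
message = ["HTH", "TTT", "THH"]
for name, book in (("codebook-1", CODEBOOK_1), ("codebook-2", CODEBOOK_2)):
    sent = encode(message, book)
    print(name, sent, decode(sent, book))
```

Both codebooks recover the same message; they differ only in how many binary digits they spend to do it.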

Codebook-2 encodes a source message and decodes an encoded message in the same way as codebook-1, only with codewords of different lengths. The choice of the encoding process is purely arbitrary. However, we are interested in whether the encoding is efficient. One metric is the average code length, defined as:

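<math> L(X) = \sum_{i} P(x_i) \, l_i </math> (1)

Where <math> l_i </math> is the code length of the codeword <math> x_i </math>. For example, the average code length of codebook-1 is:

<math> L(X) = 8 \cdot \frac{1}{8} \cdot 3 = 3 </math> bits/symbol

The average code length of codebook-2 is:

<math> L(X) = \frac{1}{8} (2 + 3 + 4 + 5 + 6 + 7 + 8 + 9) = 5.5 </math> bits/symbol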

Clearly, codebook-1 has a shorter average code length than codebook-2. Let's introduce another metric called the coding efficiency. Coding efficiency is defined as:

<math> \eta = \frac{H(S)}{L(X)} </math> (2)

Where <math> H(S) </math> is the source entropy. The coding efficiency has a range of <math> 0 \leq \eta \leq 1 </math>. If you recall our "go-to" interpretation of entropy, given some entropy <math> H(S) </math> means we can represent <math> 2^{H(S)} </math> outcomes. Essentially, <math> H(S) </math> is the minimum average number of bits per symbol we can use to represent all outcomes of a source, whatever its distribution. Of course, a smaller coding length is desirable if we want to compress representations. For our simple coin flip example, <math> H(S) = \log_2(8) = 3 </math> bits/symbol. The efficiency of codebook-1 is <math> \eta = \frac{3}{3} = 1 </math>. Codebook-1 is said to be maximally efficient because it matches the minimum number of bits needed to represent the source symbols. The efficiency of codebook-2 is <math> \eta = \frac{3}{5.5} = 0.545 </math>.
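The two metrics can be checked numerically. The sketch below computes the source entropy, the average code length, and the coding efficiency for codebook-1 and codebook-2, assuming the eight three-coin symbols are equiprobable as stated above. The helper names (`entropy`, `average_code_length`, `coding_efficiency`) are illustrative choices, not names from the original module.

```python
from math import log2


def entropy(probs):
    """Source entropy H(S) in bits/symbol."""
    return -sum(p * log2(p) for p in probs if p > 0)


def average_code_length(probs, codewords):
    """Average code length L(X): each codeword length weighted by its probability."""
    return sum(p * len(cw) for p, cw in zip(probs, codewords))


def coding_efficiency(probs, codewords):
    """Coding efficiency eta = H(S) / L(X)."""
    return entropy(probs) / average_code_length(probs, codewords)


probs = [1 / 8] * 8  # three fair coin flips, all outcomes equiprobable
codebook_1 = [format(i, "03b") for i in range(8)]   # 000 ... 111
codebook_2 = ["0" * (i + 1) + "1" for i in range(8)]  # 01 ... 000000001

for name, book in (("codebook-1", codebook_1), ("codebook-2", codebook_2)):
    print(name,
          "L(X) =", average_code_length(probs, book),
          "eta =", round(coding_efficiency(probs, book), 3))
```

Because the functions take a probability list, the same sketch also works for non-uniform sources, where the weighting in the average code length starts to matter.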

Channel Rates and Channel Capacity

Let's bring back Bob and Alice into the scene again. When Bob sends his file to Alice, it takes a while before she gets the entire message because the channel has limited bandwidth. Bandwidth is the data rate that the channel supports. Let's define <math> C </math> as the maximum channel capacity in units of bits per second (bits/s). We'll provide a quantitative approach later. Channels have different rates because of their physical limitations and noise. For example, an Ethernet link can achieve a bandwidth of 10 Gbps (gigabits per second), while Wi-Fi can only achieve a maximum of 6.9 Gbps. It really is slower to communicate wirelessly. It is also possible to transmit data into the channel at a much slower rate, in which case we are not utilizing the full capacity of the channel. We'd like to encode our source symbols into codewords that utilize the full capacity of a channel. Shannon's source coding theorem says:

"Let a source have entropy <math> H(S) </math> (bits per symbol) and a channel have a capacity <math> C </math> (bits per second). Then it is possible to encode the output of the source in such a way as to transmit at the average rate <math> \frac{C}{H(S)} - \epsilon </math> symbols per second over the channel where <math> \epsilon </math> is arbitrarily small. It is not possible to transmit at an average rate greater than <math> \frac{C}{H(S)} </math>." [1]

Take note that <math> \epsilon </math> in Shannon's statement is not the noise probability that we know. It's just a term that is used to indicate a small margin. In a nutshell, Shannon's source coding theorem says that we can calculate a maximum symbol rate <math> R_{\textrm{max}} </math> (symbols/s) that utilizes the maximum channel rate <math> C </math> while knowing the source entropy <math> H(S) </math>. This maximum symbol rate is given by:

<math> R_{\textrm{max}} = \frac{C}{H(S)} </math> (3)

Let's look at a few examples to appreciate this.

Channel Rate Example 1: Three Coin Flips

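Let's re-use the three coin flips example. Suppose we want to send the three coin flip symbols over some channel that has a channel capacity <math> C = 3 </math> bits/s. We also know (from the above example) that we need a minimum of <math> H(S) = 3 </math> bits per symbol to represent each outcome. This translates to:

<math> R_{\textrm{max}} = \frac{3 \ \textrm{bits/s}}{3 \ \textrm{bits/symbol}} = 1 \ \textrm{symbol/s} </math>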

If we are to utilize the maximum capacity of the channel, we need to encode the source symbols into a binary representation such that each binary digit in each codeword provides 1 bit of information [2]. Luckily, codebook-1 does this because we have a coding efficiency of <math> \eta = 1 </math>, which means that every binary digit of the codeword provides 1 bit of information. We are maximally efficient with this encoding. If you think about it, if we send 1 symbol/s into the channel and each symbol (e.g., TTT) is encoded with 3 bits, then essentially we are sending 3 bits/s into the channel. Since <math> C = 3 </math> bits/s, we have utilized the entire maximum channel capacity.
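As a quick numerical check of equation 3, the sketch below computes the maximum symbol rate from a channel capacity and a source distribution. The function name `max_symbol_rate` is an illustrative assumption, and the capacity of 3 bits/s is the value implied by the example's conclusion that 3 bits/s fills the channel.

```python
from math import log2


def entropy(probs):
    """Source entropy H(S) in bits/symbol."""
    return -sum(p * log2(p) for p in probs if p > 0)


def max_symbol_rate(capacity_bits_per_s, probs):
    """Maximum symbol rate R_max = C / H(S), in symbols/s."""
    return capacity_bits_per_s / entropy(probs)


coin_probs = [1 / 8] * 8  # three fair coin flips
r_max = max_symbol_rate(3.0, coin_probs)
print("R_max =", r_max, "symbols/s")

# With codebook-1 each symbol costs 3 binary digits, so sending at R_max
# puts 3 * R_max bits/s into the channel.
print("bits/s sent with codebook-1 =", 3 * r_max)
```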

Channel Rate Example 2: A 6-sided Die

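This time, suppose we want to send the symbols of a fair 6-sided die on a channel that only has <math> C = 1 </math> bits/s. The source <math> S </math> has outcomes <math> \{1,2,3,4,5,6 \} </math>. Of course, all outcomes are equiprobable with <math> P(s_i) = \frac{1}{6} </math>. From here, we can determine <math> H(S) = \log_2(6) = 2.58 </math> bits/symbol. Therefore:

<math> R_{\textrm{max}} = \frac{1 \ \textrm{bits/s}}{ 2.58 \ \textrm{bits/symbol}} = 0.387 \ \textrm{symbols/s} </math>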

Again, Shannon says that with the given <math> C </math> and <math> H(S) </math> we can only transmit at a maximum symbol rate of <math> R_{\textrm{max}} = 0.387 </math> symbols/s. Now, let's think of an encoding scheme that suits our needs. For convenience, to represent 6 unique outcomes, we can use 3 bits to represent each outcome. Let's say we have codebook-3:

| Source Symbol | Codeword |

|---|---|

| 1 | 000 |

| 2 | 001 |

| 3 | 010 |

| 4 | 011 |

| 5 | 100 |

| 6 | 101 |

The coding efficiency of codebook-3 for the 6-sided die example is:

<math> \eta = \frac{H(S)}{L(X)} = \frac{2.58}{3} = 0.86 </math>

This is not that efficient but it will suffice for now. Here, let's introduce the effective symbol rate <math> R_X </math> due to the new encoding:

<math> R_{X} = \frac{\eta C}{H(S)} </math> (4)

Our encoding scheme affects how much data we're putting into the channel. If our coding efficiency isn't optimal (<math> \eta \neq 1 </math>), then we are only utilizing a fraction of the maximum capacity <math> C </math>. Hence we need the effective channel transmission rate <math> \eta C </math> incorporated into our effective symbol rate <math> R_X </math>. Calculating <math> R_X </math> for the encoding in codebook-3 gives:

<math> R_X = \frac{0.86 \cdot 1}{2.58} = 0.333 </math> symbols/s.
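Equation 4 can be sanity-checked with a few lines of Python. The sketch below assumes the fixed 3-bit codewords of codebook-3 and a channel of 1 bit/s, and it also shows that, since the efficiency is the entropy divided by the average code length, the effective rate simplifies to the capacity divided by the average code length. The function name `effective_symbol_rate` is an illustrative assumption.

```python
from math import log2


def effective_symbol_rate(efficiency, capacity, source_entropy):
    """Effective symbol rate R_X = eta * C / H(S), in symbols/s."""
    return efficiency * capacity / source_entropy


capacity = 1.0            # bits/s
source_entropy = log2(6)  # fair 6-sided die, about 2.58 bits/symbol
code_length = 3           # codebook-3 uses 3 binary digits per symbol

efficiency = source_entropy / code_length
r_x = effective_symbol_rate(efficiency, capacity, source_entropy)
r_max = capacity / source_entropy

print("eta   =", round(efficiency, 3))
print("R_X   =", round(r_x, 3), "symbols/s")
print("R_max =", round(r_max, 3), "symbols/s")
# eta * C / H(S) is the same quantity as C / L(X):
print("C / L(X) =", round(capacity / code_length, 3), "symbols/s")
```

Seen this way, the only lever for raising the effective rate on a fixed channel is to shrink the average number of binary digits spent per source symbol.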

Observe that <math> R_X = 0.333 < R_{\textrm{max}} = 0.387 </math>. We are not even close to the maximum rate. Can we find a better encoding? How about this: suppose we create a composite symbol that consists of three 6-sided die symbols. For example, we can let <math> 111 </math> be one symbol, or we can let <math> 555 </math> be another symbol. If we do so, then we have <math> 6\times6\times6 = 216 </math> unique possible outcomes for the new set of symbols. To represent 216 outcomes we need a minimum codeword length of 8 bits (because <math> 2^7 = 128 < 216 \leq 2^8 = 256 </math>). So we can have codebook-4 as:

| Source Symbol | Codeword |

|---|---|

| 111 | 0000_0000 |

| 112 | 0000_0001 |

| 113 | 0000_0010 |

| ... | ... |

| 665 | 1101_0110 |

| 666 | 1101_0111 |

The underscores are just there to separate the codeword into groups of 4 bits for clarity. Codebook-4 looks really long but in practice, that's still small. So what changes? Since we have 8 bits per 3 symbols, we effectively have <math> L(X) = \frac{8}{3} </math> bits per original die symbol. Take note that all outcomes are equiprobable, so dividing the 8 bits by 3 symbols is enough. This results in a coding efficiency of:

<math> \eta = \frac{H(S)}{L(X)} = \frac{2.58}{2.67} = 0.97 </math>

Cool! We're much closer to being maximally efficient. Now, let's compute the new <math> R_X </math> for codebook-4:

<math> R_X = \frac{\eta C}{H(S)} = \frac{0.97 \cdot 1}{2.58} = 0.376 </math> symbols/s.

Superb! The new <math> R_X = 0.376 </math> is close to the maximum rate of <math> R_{\textrm{max}} = 0.387 </math>. The key trick was to create a composite symbol that consists of 3 of the original source symbols, then represent it with a codeword of 8 binary digits.
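The composite-symbol trick generalizes to any block size. The following sketch, under the same assumptions as the example (fair die, fixed-length binary codewords, a channel of 1 bit/s), computes the bits per block, the average code length per die symbol, the efficiency, and the effective symbol rate for several block sizes. The function name `block_code_stats` and the choice of block sizes are illustrative.

```python
from math import log2


def block_code_stats(block_size, capacity=1.0, sides=6):
    """Stats for a fixed-length binary code over blocks of die symbols."""
    outcomes = sides ** block_size
    # Smallest number of bits b with 2**b >= outcomes (integer arithmetic).
    bits_per_block = (outcomes - 1).bit_length()
    length_per_symbol = bits_per_block / block_size   # L(X) per die symbol
    source_entropy = log2(sides)                      # H(S) per die symbol
    efficiency = source_entropy / length_per_symbol   # eta = H(S) / L(X)
    rate = efficiency * capacity / source_entropy     # R_X = eta * C / H(S)
    return bits_per_block, length_per_symbol, efficiency, rate


for k in (1, 2, 3, 5, 7):
    bits, length, eta, rate = block_code_stats(k)
    print(f"block={k}  bits/block={bits}  L(X)={length:.3f}  "
          f"eta={eta:.3f}  R_X={rate:.3f} symbols/s")
```

With unrounded intermediate values, the block of three gives 0.375 symbols/s; the 0.376 above comes from rounding the efficiency to 0.97 first. Efficiency does not improve monotonically with block size, since it depends on how close the number of block outcomes sits to a power of two.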

Maximum Channel Capacity

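This time, let's look into how we can quantitatively determine the maximum channel capacity. In the last module, we comprehensively investigated the measures of <math> H(R) </math>, <math> H(R|S) </math>, and <math> I(R,S) </math> on a BSC. Recall that:

<math> I(R,S) = H(R) - H(R|S) </math>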

If you think about it, <math> I(R,S) </math> is a measure of channel quality. It tells us how well we can estimate the input from an observed output. In English, we said that <math> I(R,S) </math> is a measure of the received information contained in the source information. Ideally, if there is no noise, then <math> H(R|S) = 0 </math>, resulting in <math> I(R,S) = H(S) = H(R) </math>. We want this because this means all the information from the source gets to the receiver! When noise is present (<math> H(R|S) \neq 0 </math>), it degrades the quality of our <math> I(R,S) </math>, and the information about the receiver contained in the source is now being shared with noise. This only means one thing: we want <math> I(R,S) </math> to be at maximum. From the previous discussion, we presented how <math> I(R,S) </math> varies with <math> p </math> while parametrizing the noise probability <math> \epsilon </math>. Figure 2 shows this plot (it's the same image from the last discussion). We can visualize <math> I(R,S) </math> as a pipe that constricts the flow of information depending on the noise parameter <math> \epsilon </math>. The GIF in Figure 3 shows the pipe visualization.

Let's modify our definition of <math> I(R,S) </math> a bit. In communication theory, <math> I(R,S) </math> is a metric for transmission rate, so its units are really bits/second. Figure 2 demonstrates how much data can be transmitted per second. Interchanging between bits and bits/second might be confusing, but for now, when we talk about channels (and also <math> I(R,S) </math>), let's use bits/s. Bits/s is also a measure of bandwidth. From the discussions, it is clear that we can define the maximum channel capacity <math> C </math> as:

<math> C = \max (I(R,S)) </math> (5)

We can re-write this as:

<math> C = \max (H(R) - H(R|S)) </math> (5)

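Here, <math> C </math> is in units of bits/second. It tells us the maximum amount of data that we can transmit per unit of time. Both <math> H(R) </math> and <math> H(R|S) </math> are affected by the noise parameter <math> \epsilon </math>. In a way, noise limits <math> C </math>. For example, a certain <math> \epsilon </math> can only provide a certain maximum <math> I(R,S) </math>. Looking at figure 2, when <math> \epsilon = 0 </math> (or <math> \epsilon = 1 </math>) we can get a maximum <math> I(R,S) = 1 </math> bits/s. When <math> \epsilon = 0.25 </math> (or <math> \epsilon = 0.75 </math>) we can get a maximum <math> I(R,S) = 0.189 </math> bits/s. These maxima occur when <math> p = 0.5 </math>. We can prove this mathematically. Let's determine <math> C </math> of a BSC given some <math> p </math> and <math> \epsilon </math>. We know that <math> H(R) </math> follows the Bernoulli entropy <math> H_b(q) </math> where <math> q = p + \epsilon - 2\epsilon p </math>, so we can write:

<math> H(R) = H_b(q) = -q \log_2(q) - (1-q) \log_2 (1-q) </math>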

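<math> \max(H(R)) = 1 </math> bits when <math> q = 0.5 </math>. Since <math> \epsilon </math> is fixed by the channel, we can manipulate the input probability <math> p </math> such that we force <math> q = 0.5 </math> and achieve the maximum <math> H(R) </math>; setting <math> p = 0.5 </math> does this for any <math> \epsilon </math>. Hold on to this very important thought. We also know that <math> H(R|S) = H_b(\epsilon) </math>. Unfortunately, since <math> \epsilon </math> is fixed, we can't assume <math> H_b(\epsilon) = 1 </math> unless <math> \epsilon = 0.5 </math>. Therefore we can write <math> C </math> as:

<math> C = \max (H(R) - H(R|S)) = \max (H_b(q)) - H_b(\epsilon) </math>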

Leading to:

<math> C = 1 - H_b(\epsilon) </math> (6)

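Equation 6 shows how the maximum capacity is limited by the noise <math> \epsilon </math>. It describes the pipe visualization in figure 3. When <math> \epsilon = 0 </math>, <math> H_b(\epsilon) = 0 </math> and our BSC can transmit <math> C = 1.0 </math> bits/s of data. When <math> \epsilon = 0.5 </math>, <math> H_b(\epsilon) = 1 </math>, the noise entropy is at maximum and our channel can transmit <math> C = 0 </math> bits/s. Clearly, we can't transmit data this way. Lastly, when <math> \epsilon = 1 </math>, then <math> H_b(\epsilon) = 0 </math> again and we're back to transmitting <math> C = 1 </math> bits/s. The most important concept in determining maximum channel capacity is to use equation 5 for any channel model that you may use.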
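The closed form can be compared against a brute-force maximization of the mutual information over the input probability. The sketch below uses the BSC expressions from this section, with the output entropy as the Bernoulli entropy of q and the conditional entropy as the Bernoulli entropy of the noise probability. The function names and the 1001-point grid over p are illustrative assumptions.

```python
from math import log2


def binary_entropy(x):
    """Bernoulli entropy H_b(x) in bits, with H_b(0) = H_b(1) = 0."""
    if x <= 0.0 or x >= 1.0:
        return 0.0
    return -x * log2(x) - (1 - x) * log2(1 - x)


def mutual_information(p, eps):
    """I(R,S) = H(R) - H(R|S) for a BSC with input probability p and noise eps."""
    q = p + eps - 2 * eps * p
    return binary_entropy(q) - binary_entropy(eps)


def bsc_capacity(eps):
    """Closed-form BSC capacity C = 1 - H_b(eps)."""
    return 1.0 - binary_entropy(eps)


for eps in (0.0, 0.25, 0.5, 0.75, 1.0):
    # Brute-force search over a grid of input probabilities p.
    best_p, best_i = max(
        ((i / 1000, mutual_information(i / 1000, eps)) for i in range(1001)),
        key=lambda pair: pair[1],
    )
    print(f"eps={eps:.2f}  closed form C={bsc_capacity(eps):.3f}  "
          f"grid max I={best_i:.3f} at p={best_p:.3f}")
```

For a noise probability of 0.5 every input probability gives zero mutual information, so the grid search simply reports the first grid point; for the other values the maximum sits at an input probability of 0.5.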

Huffman Coding

As a nice sneak peek into data compression, let's look into Huffman coding.